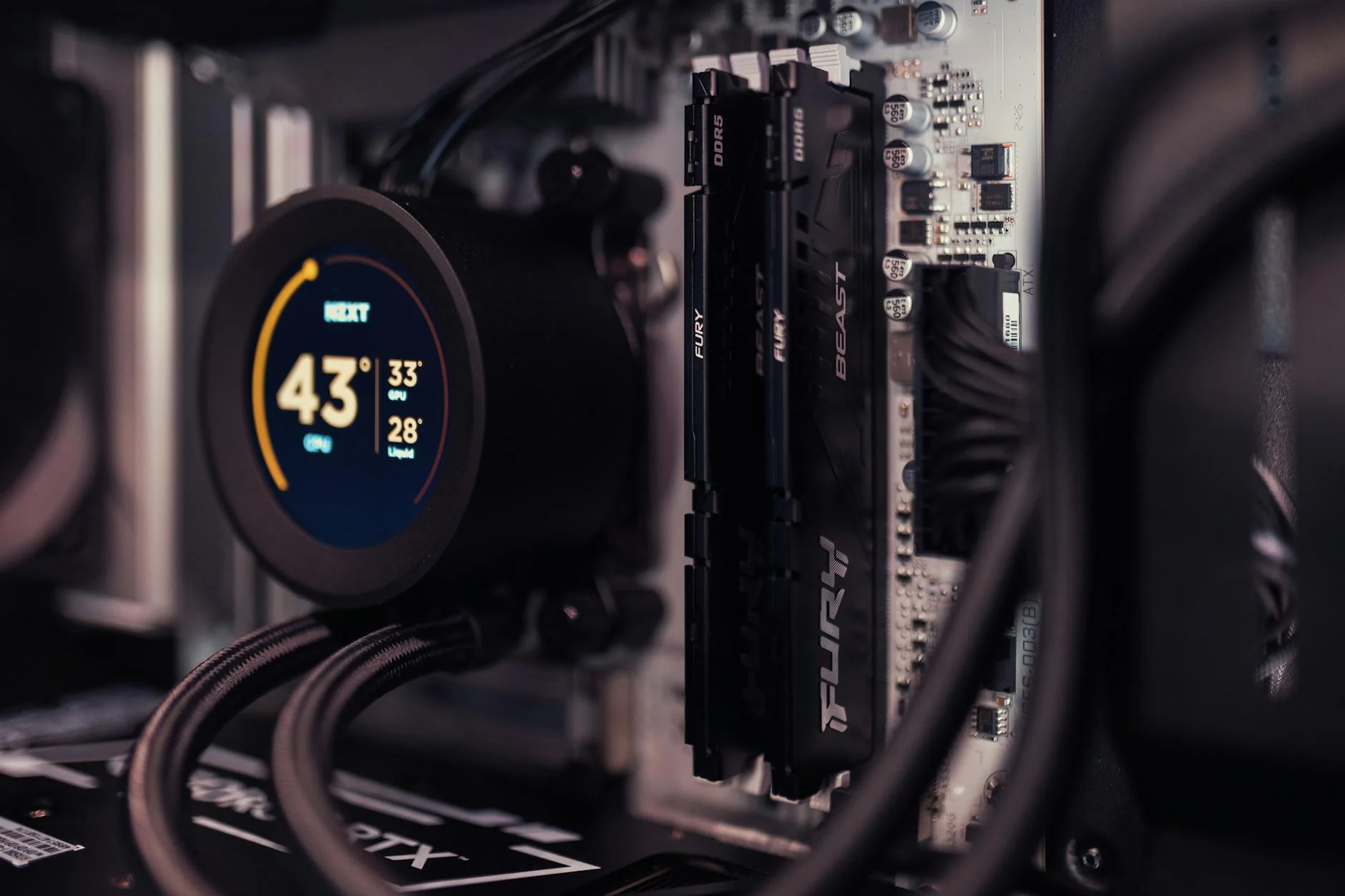

A single number, 12,800 MT/s, is the new benchmark for DDR5 memory performance, and it is more than a routine spec bump. The latest JEDEC standard for MRDIMMs is roughly a 45% increase over the previous generation, a jump that could ripple through AI-driven data centers, where memory bandwidth has become a limiting factor in training larger models faster.

But what does this speed mean in practice? For workloads built on massive parallelism, such as AI training and inference, the gain goes beyond moving more data per second: higher per-channel bandwidth means fewer stalls while cores wait on memory, and a tighter coupling between memory and compute. The move to 12,800 MT/s is less a routine upgrade than a sign of how memory hierarchies are being restructured in next-gen systems.

Where This Fits in the Ecosystem

The shift to higher-speed DDR5 MRDIMMs is part of a broader trend toward optimizing memory subsystems for AI and high-performance computing. Unlike traditional DIMMs, which were designed around general-purpose workloads, MRDIMM (Multiplexed Rank DIMM) was introduced to address the specific needs of data centers: higher bandwidth and capacity per slot, better scalability, and lower power consumption when paired with modern CPUs and GPUs.

Key specs:

- 12,800 MT/s transfer rate (up from 8,800 MT/s in the previous standard)

- Designed for multiplexed-rank operation to maximize bandwidth per DIMM

- Compatible with existing DDR5 infrastructure, so there is no immediate hardware obsolescence
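To put the transfer rates in bandwidth terms, here is a minimal sketch of the arithmetic. It assumes a 64-bit data path per channel (ECC bits excluded) and takes 8,800 MT/s as the previous MRDIMM rate, the figure consistent with the roughly 45% increase cited above. The function names are illustrative only, and the results are theoretical peaks rather than measured throughput.

```python
def peak_bandwidth_gbs(transfer_rate_mts: float, bus_width_bits: int = 64) -> float:
    """Theoretical peak bandwidth in GB/s (1 GB = 1e9 bytes).

    Assumes one transfer moves bus_width_bits of data; the 64-bit
    default is an assumption about the channel's data width.
    """
    bytes_per_transfer = bus_width_bits / 8
    return transfer_rate_mts * 1e6 * bytes_per_transfer / 1e9


def percent_increase(old: float, new: float) -> float:
    """Relative increase from old to new, in percent."""
    return (new - old) / old * 100


PREVIOUS_MTS = 8_800   # assumed previous-generation MRDIMM rate
NEW_MTS = 12_800       # rate named in the new standard

if __name__ == "__main__":
    for rate in (PREVIOUS_MTS, NEW_MTS):
        print(f"{rate} MT/s -> {peak_bandwidth_gbs(rate):.1f} GB/s peak per channel")
    print(f"Increase: {percent_increase(PREVIOUS_MTS, NEW_MTS):.1f}%")
```

Under these assumptions the peak works out to 70.4 GB/s per channel at 8,800 MT/s and 102.4 GB/s at 12,800 MT/s, an increase of about 45.5%.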

The 12,800 MT/s figure is not purely a theoretical maximum; manufacturers are already targeting it in pre-production modules. Data centers looking to future-proof their AI workloads, whether for training or inference, will need to start evaluating whether their current memory subsystems can keep up with the demands of next-gen models.

Who Benefits Most

The clear beneficiaries here are AI workloads, particularly those that rely on large-scale matrix operations. Training a deep learning model requires moving vast amounts of data between memory and the processors that consume it, and time spent waiting on memory is time not spent computing. With 12,800 MT/s MRDIMMs, the bandwidth gap between memory and compute narrows, which could lead to more efficient training cycles and better throughput during inference.
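One common way to reason about whether a workload gains from extra bandwidth is a roofline-style estimate: attainable throughput is the smaller of peak compute and bandwidth multiplied by arithmetic intensity (FLOPs per byte moved). The sketch below uses that model. The peak compute figure and the intensity value are hypothetical placeholders rather than numbers from any specific CPU or model, and the bandwidth values assume a 64-bit channel at 8,800 and 12,800 MT/s.

```python
def attainable_gflops(peak_gflops: float, bandwidth_gbs: float,
                      flops_per_byte: float) -> float:
    """Roofline estimate: the lower of the compute roof and the memory roof."""
    memory_roof = bandwidth_gbs * flops_per_byte
    return min(peak_gflops, memory_roof)


# Hypothetical values chosen only for illustration.
HYPOTHETICAL_PEAK_GFLOPS = 2_000.0   # assumed compute roof
HYPOTHETICAL_INTENSITY = 4.0         # assumed FLOPs per byte of memory traffic

# Theoretical per-channel peaks, assuming a 64-bit data path.
BANDWIDTH_8800_GBS = 70.4
BANDWIDTH_12800_GBS = 102.4

if __name__ == "__main__":
    for label, bw in (("8,800 MT/s", BANDWIDTH_8800_GBS),
                      ("12,800 MT/s", BANDWIDTH_12800_GBS)):
        est = attainable_gflops(HYPOTHETICAL_PEAK_GFLOPS, bw, HYPOTHETICAL_INTENSITY)
        print(f"{label}: ~{est:.1f} GFLOP/s attainable per channel")
```

In this model a memory-bound kernel scales directly with bandwidth, while a kernel that already reaches the compute roof sees no benefit from faster memory.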

But the impact isn’t limited to AI. High-performance computing clusters, scientific simulations, and even next-gen gaming rigs, where memory bandwidth has become a bottleneck in rendering complex scenes, could see tangible improvements. The key takeaway is that this standard is about more than raw speed: it changes how memory and compute work together in systems where every cycle matters.

For data center operators, the question now is whether to upgrade existing infrastructure or wait for the next generation of CPUs and GPUs optimized for these higher-speed modules. The choice will depend on how quickly the ecosystem adopts the standard, but the 12,800 MT/s mark looks like more than a milestone: it is a turning point in how memory is designed for AI-driven workloads.