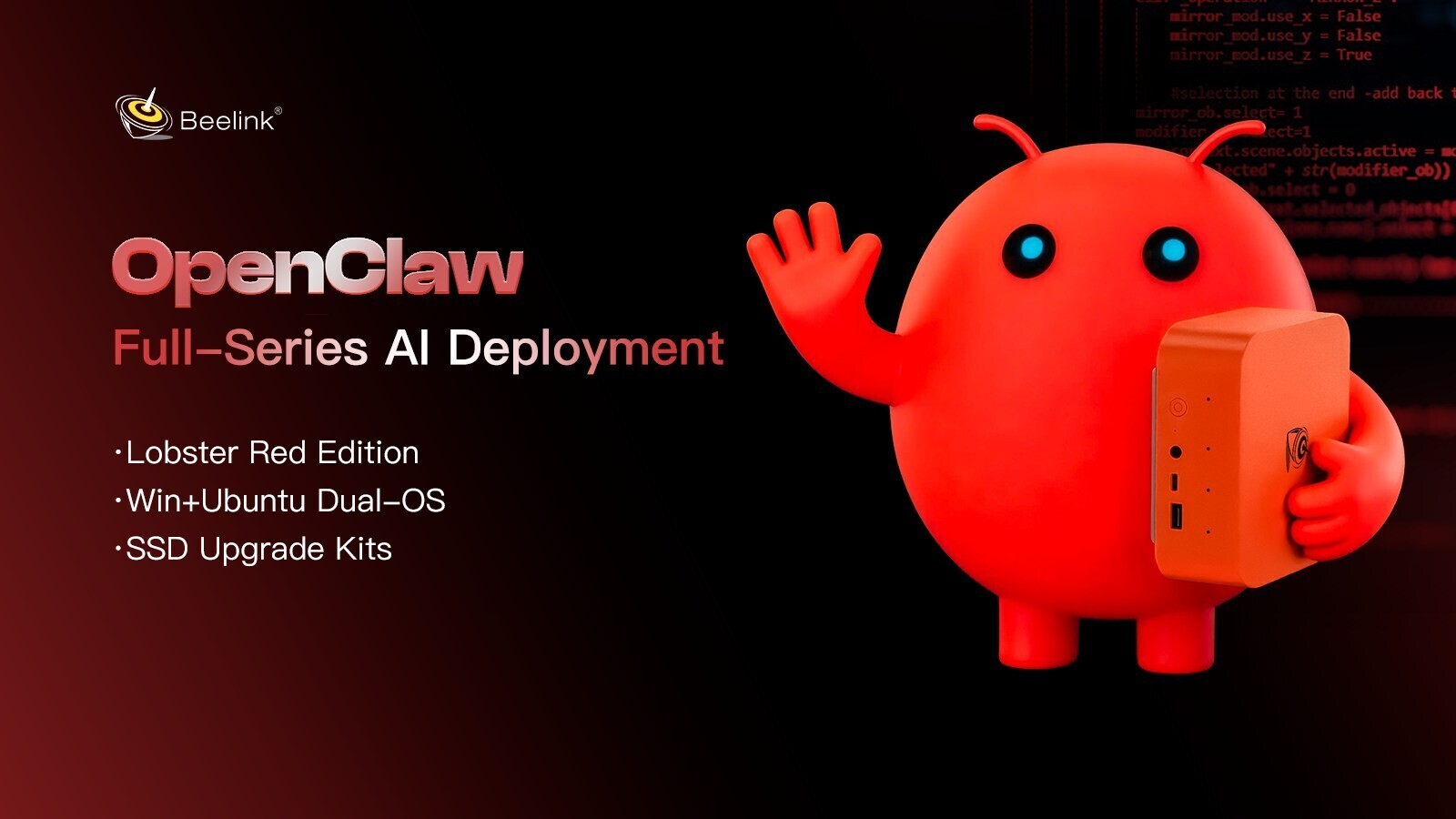

AI agents are transforming productivity, but many users struggle with setup and configuration. Beelink aims to simplify this with a new series of mini PCs that integrate OpenClaw directly into the hardware, reducing barriers to AI adoption.

The lineup includes models with preinstalled OpenClaw for local inference or cloud model access, as well as dual-OS options for seamless switching between Windows and Ubuntu. For those already using Beelink devices, plug-and-play SSD kits offer an upgrade path without requiring a full system replacement.

Key Features and Models

- Lobster Red Editions: All-metal chassis in an exclusive colorway, designed for AI workloads with high performance and silent operation.

- Local Inference Models (GTR9 Pro 395, SER10 MAX 470, etc.): Run inference entirely on-device with up to 24 GB of DDR5 RAM, delivering ~52 tokens/sec on GPT-OSS 120B.

- Cloud Model Access (SER9 Pro 255, EQR7 Pro 7735HS, etc.): Direct API access to GPT-4o, Claude, and Gemini with flexible performance tiers.

- Dual-OS Editions: Instant switching between Windows for daily tasks and Ubuntu for AI development, consolidating multiple workflows into one device.

The new models are positioned as higher-spec alternatives to the Mac mini, offering more RAM, storage, and expandability at a comparable price point. Existing Beelink owners can upgrade via plug-and-play SSD kits in 1 TB, 2 TB, or 4 TB capacities, preloaded with OpenClaw and local LLMs where supported.

Support and Availability

All products are backed by a three-year warranty and include dedicated AI support for setup and operation. The lineup will be available soon on Beelink’s official website and Amazon.

This initiative reflects the growing demand for AI-ready hardware that balances performance, privacy, and ease of deployment without relying solely on cloud resources.