ASUS is stepping into the high-stakes world of AI data center cooling with a new Optimized Liquid-Cooling Solutions framework, designed to address the escalating thermal and power demands of next-generation computing architectures. The announcement marks a strategic expansion into liquid-cooled infrastructure. It draws on partnerships with infrastructure vendors Schneider Electric and Vertiv and with component specialists Auras Technology and Cooler Master.

The push comes as AI and high-performance computing (HPC) workloads increasingly outpace traditional air-cooling systems. ASUS's solutions span direct-to-chip (D2C) cooling, in-row coolant distribution unit (CDU) cooling, and hybrid setups. They aim to reduce energy consumption, lower Power Usage Effectiveness (PUE), and improve total cost of ownership (TCO) in ultra-dense environments.

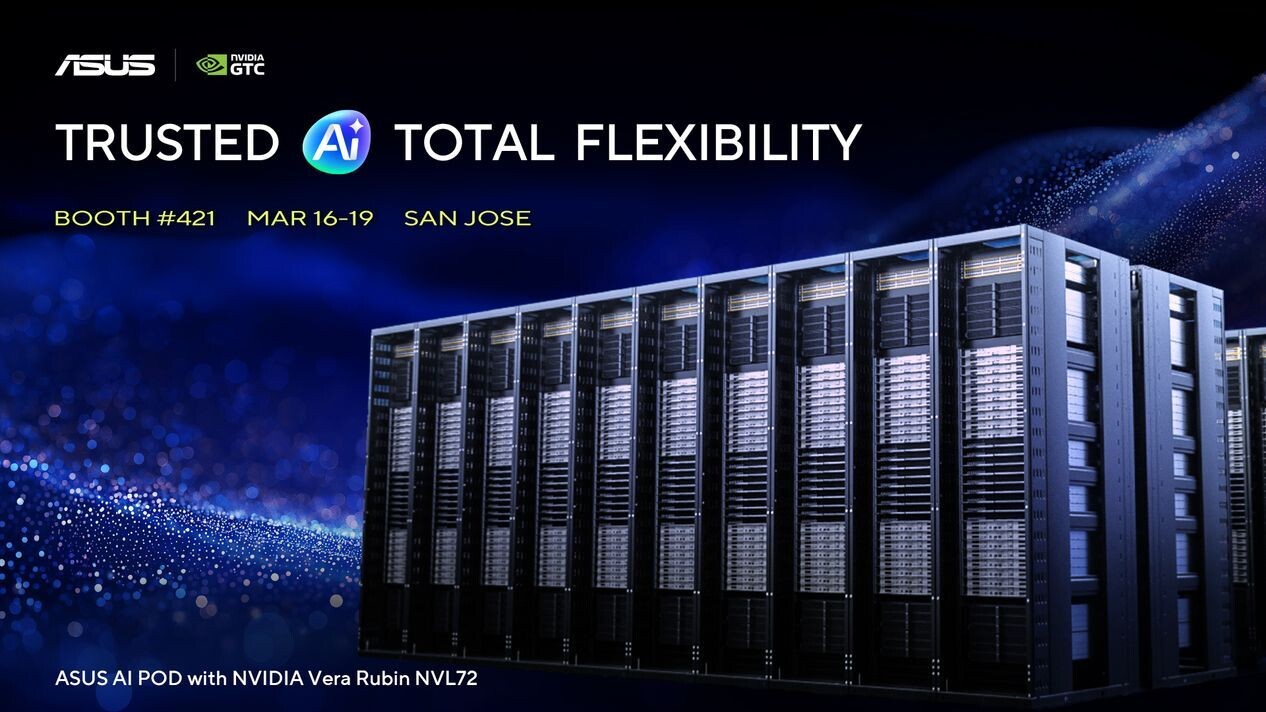

One of the most critical applications is support for NVIDIA's Vera Rubin NVL72 systems, which use HBM4 memory and are expected to anchor the next generation of AI supercomputers. ASUS claims that integrating liquid cooling into these architectures enables unprecedented rack density while maintaining stability at scale. As evidence of its ability to push real-world performance limits, the company cites 2,156 SPEC CPU records and 248 top spots in MLPerf.

The Upside: Energy Efficiency and Scalability

A standout example of ASUS's capabilities is its recent collaboration with Taiwan's National Center for High-Performance Computing (NCHC). The deployment uses a dual-compute architecture that combines NVIDIA HGX H200 and GB200 NVL72 systems, making it Taiwan's first fully liquid-cooled AI supercomputer of this kind. With direct liquid cooling (DLC), ASUS achieved a PUE of 1.18, a strong result for sustainable high-performance computing.
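PUE is the ratio of total facility power to the power drawn by IT equipment alone. A value of 1.0 would mean zero overhead for cooling and power conversion. The short sketch below shows what a PUE of 1.18 implies in practice. The 1,000 kW IT load and the PUE 1.5 comparison point are hypothetical figures chosen for illustration. They are not numbers reported for the NCHC deployment.

```python
def pue(total_facility_kw: float, it_equipment_kw: float) -> float:
    """Power Usage Effectiveness: total facility power / IT equipment power."""
    if it_equipment_kw <= 0:
        raise ValueError("IT equipment load must be positive")
    return total_facility_kw / it_equipment_kw


def overhead_kw(pue_value: float, it_equipment_kw: float) -> float:
    """Non-IT power (cooling, power conversion, lighting) implied by a PUE value."""
    return (pue_value - 1.0) * it_equipment_kw


# Hypothetical 1,000 kW IT load, for illustration only.
it_load_kw = 1000.0
liquid_cooled = overhead_kw(1.18, it_load_kw)   # overhead at the reported PUE
hypothetical = overhead_kw(1.5, it_load_kw)     # assumed comparison facility
print(f"Overhead at PUE 1.18: {liquid_cooled:.0f} kW")
print(f"Overhead at PUE 1.50: {hypothetical:.0f} kW")
print(f"Difference: {hypothetical - liquid_cooled:.0f} kW")
```

Under these assumptions, each kilowatt delivered to the servers needs only about 0.18 kW of supporting overhead.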

The framework also includes precision components from industry partners, which ASUS says ensure compatibility with high-power GPUs such as NVIDIA's GeForce RTX 50 series. That series includes the RTX 5070 Ti, the RTX 5060 Ti, and the RTX 5090, which is rumored to reach $5,000. ASUS's focus is primarily on data center-grade solutions, but the underlying cooling technologies may indirectly influence consumer and workstation markets, particularly as power demands rise.

Limitations and Uncertainties

Despite the promise, challenges remain. Liquid-cooling solutions require significant upfront infrastructure investment, and adoption will depend on whether they prove cost-effective at scale. The 250 W power delivery that ASUS has proposed for certain PCIe slots points to a focus on high-end GPUs. Mainstream adoption, however, may lag until costs approach those of traditional air cooling.

Additionally, the CES 2026 timeline hints at future consumer applications, but ASUS has not yet detailed how these data center innovations will trickle down. For now, the emphasis remains on enterprise and AI workloads, where thermal efficiency is non-negotiable.

What’s Next?

ASUS will showcase its liquid-cooling ecosystem at NVIDIA GTC 2026 (March 16–19 in San Jose) under the theme "Trusted AI, Total Flexibility." The event will likely provide deeper insight into real-world deployments, including the NCHC supercomputer, and into how these solutions integrate with NVIDIA's Vera Rubin architecture.

The company's move underscores a broader industry shift toward liquid cooling as AI and HPC systems push beyond the limits of air-based thermal management. For data center operators, the question is no longer *if* but *when* liquid cooling becomes essential. ASUS is positioning itself as a key enabler of that transition.