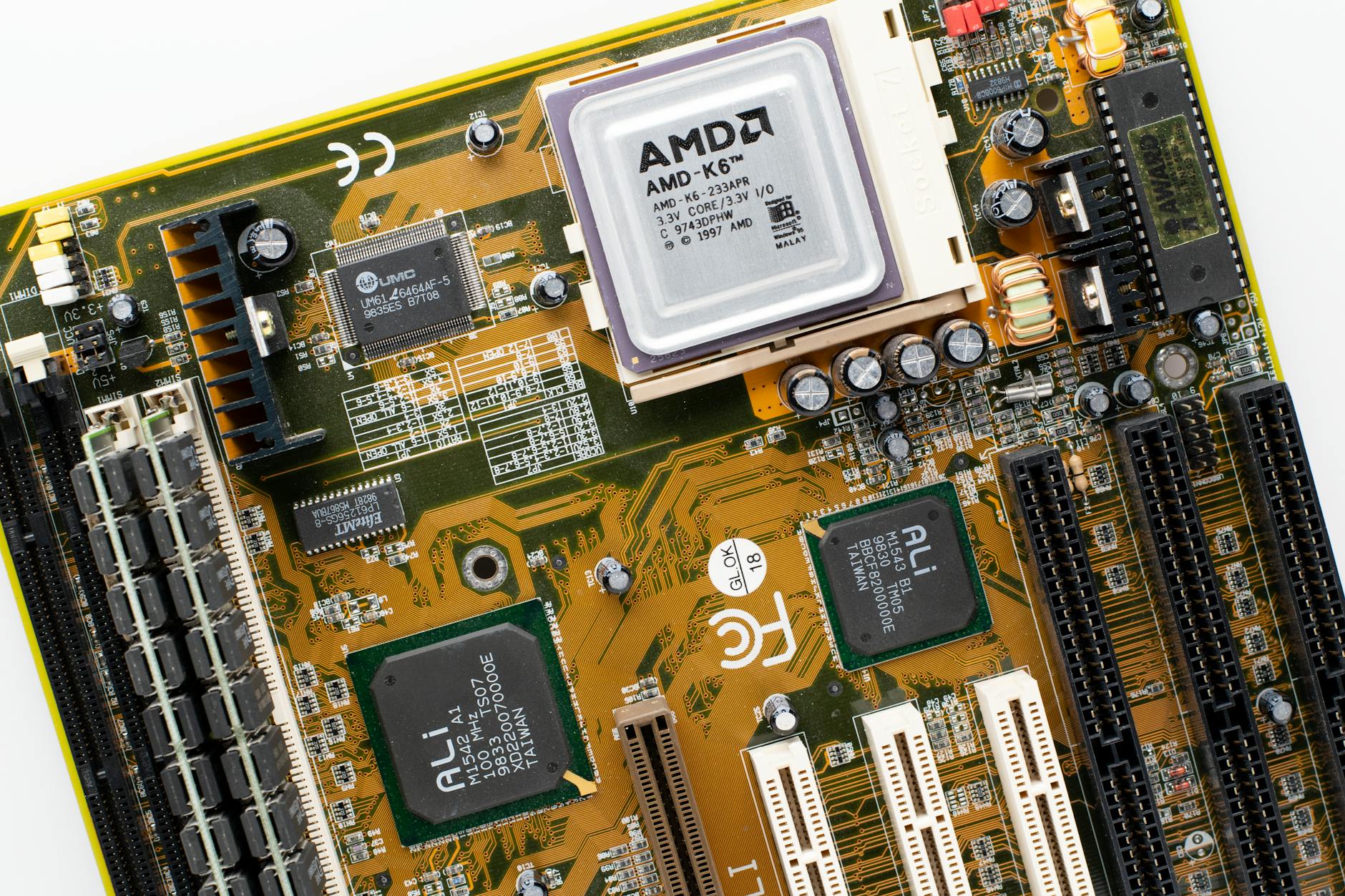

Anthropic is expanding its AI training capacity with a fresh injection of 220,000 NVIDIA GPUs, reinforcing its position at the forefront of large-language-model development. While the exact model remains unspecified, industry sources suggest these are H100 or H200 accelerators, NVIDIA’s Hopper-generation chips designed for high-performance AI workloads.

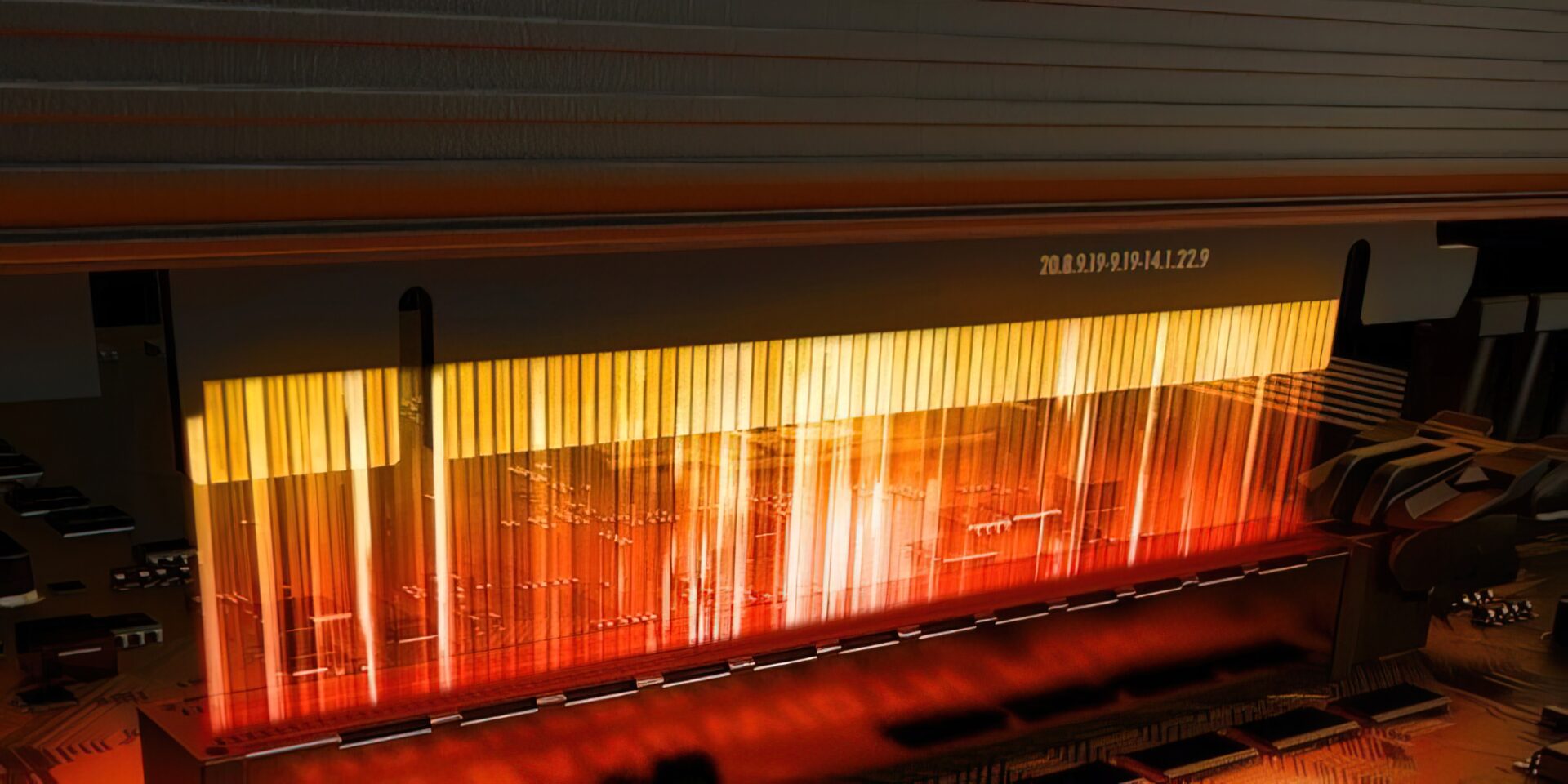

This isn’t just a numbers game; it’s about operational efficiency. Anthropic’s internal benchmarks indicate that each of these GPUs will handle more than 500 terabytes of data per day, pushing the boundaries of what’s feasible in a single training run. The company is also reportedly eyeing orbital compute infrastructure that would distribute AI workloads across satellite networks, though no concrete timelines or technical details have been disclosed.

Specs and Scale

- GPU Type: Likely H100/H200 (NVIDIA’s Hopper-generation AI accelerators)

- Quantity: 220,000 units (in addition to existing inventory)

- Data Throughput: Estimated 500+ TB/day per GPU

- Orbital Ambitions: Multi-gigawatt compute capacity reportedly under consideration (no timeline disclosed)
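
To put the throughput figure in context, the numbers above can be converted into a sustained per-GPU rate and a fleet-wide total. This is a back-of-the-envelope sketch, not a description of how Anthropic measures throughput: it assumes decimal units (1 TB = 1,000 GB), treats 500 TB/day as a flat rate, and assumes all 220,000 GPUs sustain that rate at the same time. The function names are illustrative.

```python
# Back-of-the-envelope conversion of the figures quoted in the spec list.
# Assumptions (not from the source): decimal units, a flat 500 TB/day rate,
# and every GPU in the order running at that rate simultaneously.

GPU_COUNT = 220_000          # units in the reported order
TB_PER_GPU_PER_DAY = 500     # reported lower bound, terabytes per GPU per day
SECONDS_PER_DAY = 24 * 60 * 60


def per_gpu_gb_per_second(tb_per_day: float) -> float:
    """Convert TB/day into GB/s (1 TB = 1,000 GB)."""
    return tb_per_day * 1_000 / SECONDS_PER_DAY


def fleet_eb_per_day(gpu_count: int, tb_per_day: float) -> float:
    """Aggregate throughput in EB/day (1 EB = 1,000,000 TB)."""
    return gpu_count * tb_per_day / 1_000_000


if __name__ == "__main__":
    rate = per_gpu_gb_per_second(TB_PER_GPU_PER_DAY)
    total = fleet_eb_per_day(GPU_COUNT, TB_PER_GPU_PER_DAY)
    print(f"{rate:.2f} GB/s sustained per GPU")
    print(f"{total:.0f} EB/day across the order")
```

Under those assumptions, 500 TB/day works out to roughly 5.8 GB/s per GPU, and the full order to about 110 exabytes per day. The estimate does not say what kind of data movement the figure counts, so treat the totals as an upper-level sanity check rather than a measured rate.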

The immediate impact: if the per-GPU estimates hold, Anthropic’s training clusters will process data at a scale that rivals or surpasses some of the largest supercomputers in operation today. The catch is that sustaining this level of performance requires not just hardware but also breakthroughs in software optimization and energy management.

Enterprises eyeing Anthropic’s models will need to weigh two factors: cost and availability. The 220,000-GPU order suggests a long-term commitment rather than a quick capacity bump, so access to these capabilities won’t be immediate. And if orbital compute becomes a reality, its infrastructure costs could redefine what ‘enterprise-grade’ AI means, potentially pushing operational expenses beyond current benchmarks.

What’s Next?

The next phase hinges on three variables: when Anthropic will integrate these GPUs into production, whether orbital compute remains a theoretical concept or moves toward prototyping, and how pricing structures adapt to this new scale. For now, the focus is on scaling horizontally (more GPUs, more data, more models), but the real test is whether this expansion translates into tangible improvements for end users.