When discussions turn to AI advancements, the spotlight often falls on faster processors or more powerful GPUs. Behind the scenes, however, memory technology plays an equally critical role in determining how efficiently these systems operate. Applied Materials and Micron are joining forces on that front, aiming to push the boundaries of energy-efficient memory for AI workloads.

One might assume that such a partnership would lead to immediate leaps in consumer hardware, such as faster GPUs or more capable storage devices. While these outcomes are possible in the long run, the near-term impact is likely to be subtler, centered on performance-per-watt rather than raw speed. The collaboration is expected to leverage Applied Materials' new $5 billion EPIC Center in Silicon Valley, designed to accelerate the transition from research breakthroughs to mass production, together with Micron's innovation hub in Boise, Idaho. This two-site approach aims to create a robust pipeline for memory technology advancements.

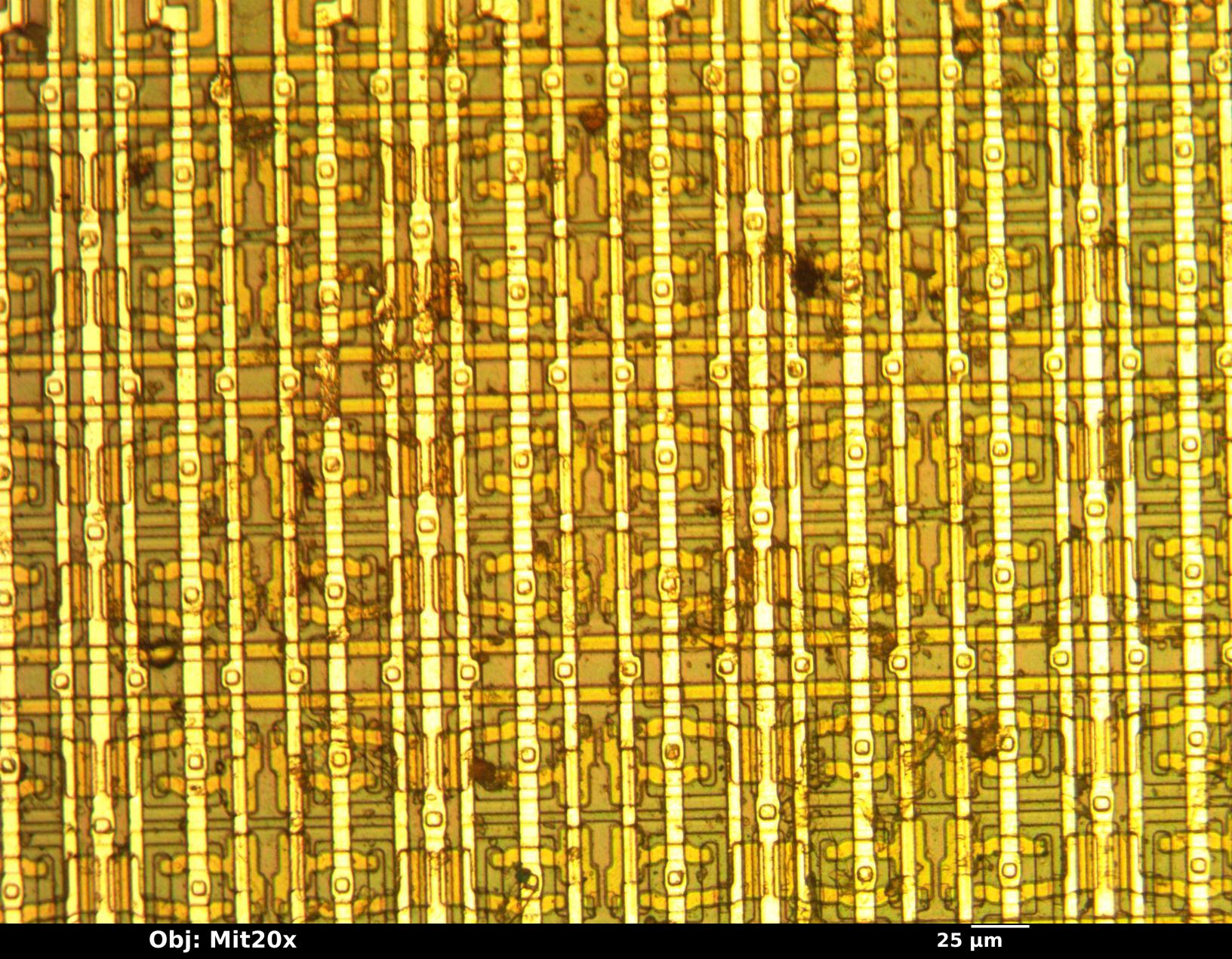

The partnership's primary focus is on next-generation DRAM, high-bandwidth memory (HBM), and NAND solutions tailored specifically for AI workloads. While these terms might not immediately resonate with the average consumer, their implications are significant. Bandwidth demands are rising across the board: AMD's upcoming RDNA 5 architecture, for instance, is rumored to feature a 384-bit memory bus. Improvements in DRAM and HBM efficiency could let GPUs and accelerators move that much data at lower power, which in turn eases thermal management, particularly in data centers and edge devices.
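To put the rumored 384-bit figure in context, peak theoretical memory bandwidth is simply the bus width times the per-pin data rate, divided by eight to convert bits to bytes. The sketch below shows that arithmetic; the 20 Gbit/s per-pin rate is a purely illustrative assumption, not a figure from AMD, Micron, or Applied Materials.

```python
def peak_bandwidth_gb_s(bus_width_bits: int, data_rate_gbit_per_pin: float) -> float:
    """Peak theoretical bandwidth in GB/s.

    Each of the bus's data pins carries `data_rate_gbit_per_pin` Gbit/s,
    so total Gbit/s is width * rate; dividing by 8 converts bits to bytes.
    """
    return bus_width_bits * data_rate_gbit_per_pin / 8


# Hypothetical per-pin data rate, chosen only to illustrate the formula.
ASSUMED_DATA_RATE_GBIT = 20.0

for width in (256, 384):
    bandwidth = peak_bandwidth_gb_s(width, ASSUMED_DATA_RATE_GBIT)
    print(f"{width}-bit bus at {ASSUMED_DATA_RATE_GBIT} Gbit/s per pin: {bandwidth:.0f} GB/s")
```

At a fixed per-pin rate, bandwidth scales linearly with bus width, which is why the energy spent per bit moved matters more as buses get wider.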

The practical benefits of this collaboration could start appearing within the next 12 to 18 months, with enterprise buyers likely to see them first. For example, a data center running AI inference tasks might see lower power bills without sacrificing throughput. Similarly, if HBM-class memory makes its way into laptops, those machines could handle complex models more smoothly while running cooler under load. However, the extent to which these advancements will filter down to consumer products remains uncertain.
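The data center example comes down to simple arithmetic: if throughput stays constant while power draw falls, performance-per-watt rises and the energy bill shrinks in proportion. The following is a minimal sketch of that calculation. Every number in it (power draw, throughput, electricity price) is a hypothetical placeholder, not a measurement or a projection from either company.

```python
HOURS_PER_YEAR = 24 * 365  # 8760, ignoring leap years


def perf_per_watt(throughput: float, power_watts: float) -> float:
    """Work done per second for each watt drawn (e.g. inferences/s per W)."""
    return throughput / power_watts


def annual_energy_cost(power_watts: float, price_per_kwh: float) -> float:
    """Yearly electricity cost for a load running continuously at power_watts."""
    kilowatts = power_watts / 1000
    return kilowatts * HOURS_PER_YEAR * price_per_kwh


# Hypothetical server: same throughput, 10% lower power after a memory upgrade.
THROUGHPUT = 5000.0    # inferences per second (assumed)
BASELINE_W = 1000.0    # watts before (assumed)
IMPROVED_W = 900.0     # watts after (assumed)
PRICE_PER_KWH = 0.10   # electricity price per kWh (assumed)

for label, watts in (("baseline", BASELINE_W), ("improved", IMPROVED_W)):
    efficiency = perf_per_watt(THROUGHPUT, watts)
    cost = annual_energy_cost(watts, PRICE_PER_KWH)
    print(f"{label}: {efficiency:.2f} inferences/s per W, {cost:.2f} per year")
```

Multiplied across thousands of servers, even a single-digit percentage reduction in power at constant throughput becomes a meaningful line item, which is why enterprise buyers are the likeliest early beneficiaries.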

The success of this partnership hinges on its ability to bridge the gap between lab-scale breakthroughs and manufacturing at scale, a challenge that has proven difficult even for well-funded R&D efforts. If successful, AI systems could see a significant improvement in performance-per-watt, potentially reshaping data center operations and edge device efficiency. Yet with no committed timelines or published benchmarks so far, the real test will be how quickly these innovations turn into tangible benefits for early adopters.

In the meantime, consumers can expect to hear more about memory efficiency as a key differentiator in AI systems, even if the changes are not immediately visible or dramatic. The focus on power-efficient solutions reflects a broader industry trend towards sustainability and performance optimization, which will likely become increasingly prominent in the coming years.