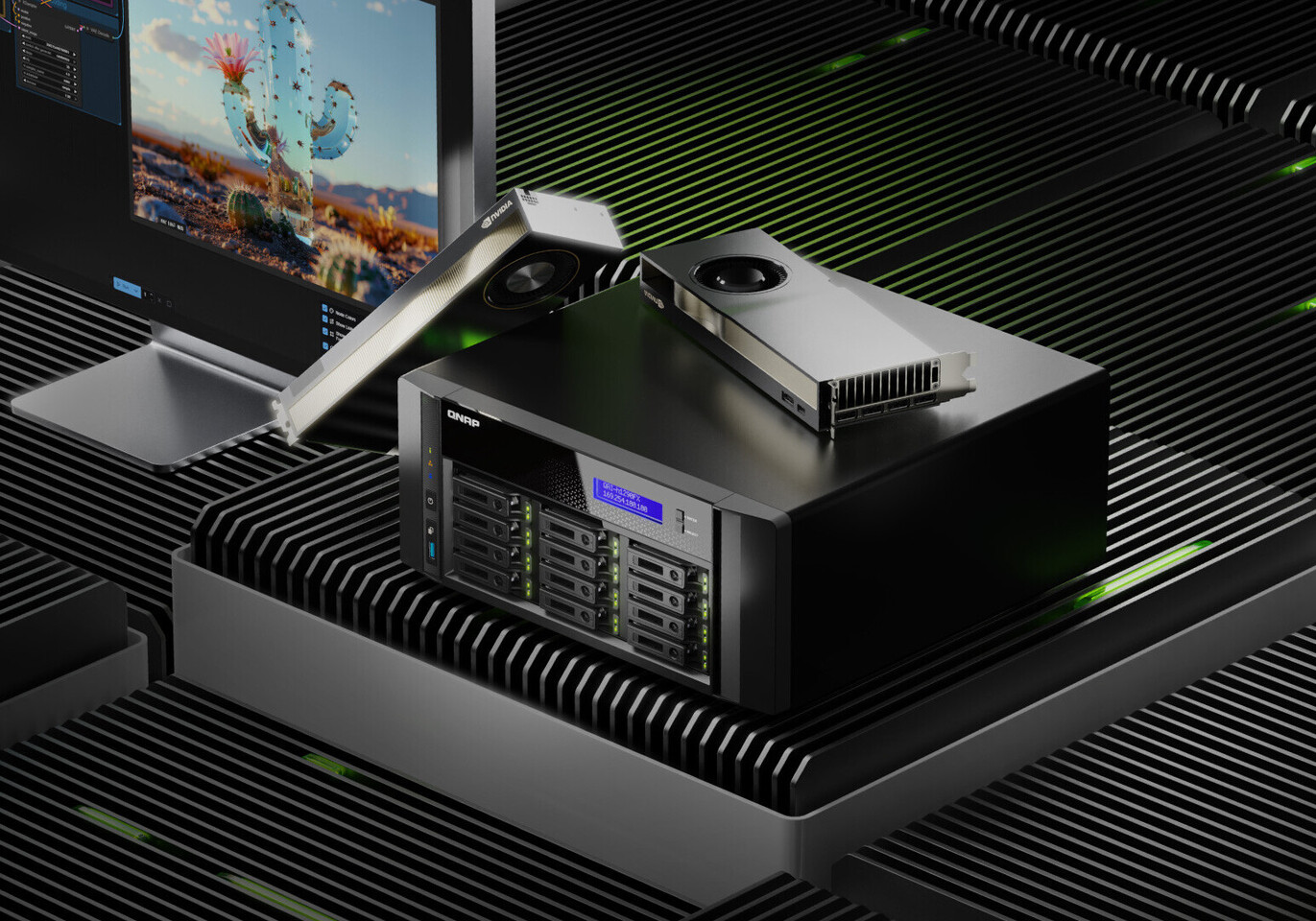

The push for self-hosted artificial intelligence is accelerating as enterprises prioritize data sovereignty and performance. QNAP has introduced the QAI-h1290FX, a purpose-built edge AI storage server designed to run large language models (LLMs), Retrieval-Augmented Generation pipelines, and generative AI applications entirely on-premises. This marks a shift toward infrastructure that eliminates cloud dependency while maintaining enterprise-grade performance.

The QAI-h1290FX leverages AMD EPYC 7302P server-class processors with 16 cores (32 threads) paired with support for NVIDIA RTX PRO 6000 Blackwell Max-Q GPUs, delivering up to 96 GB of GPU memory. This configuration is optimized for AI inference, virtualization, and high-frequency data streaming—key requirements for organizations deploying LLMs at the edge.

What’s New in the QAI-h1290FX

- All-Flash Storage Architecture: Twelve U.2 NVMe/SATA SSD slots provide ultra-fast I/O, critical for real-time AI model execution and data processing.

- GPU-Accelerated Compute: Supports optional NVIDIA RTX PRO 6000 Blackwell Max-Q GPUs with CUDA, TensorRT, and Transformer Engine acceleration—significantly boosting performance for LLM inference, image generation, and deep learning.

- Containerized AI Environment: Built-in support for Docker and LXD with intuitive GPU resource management, allowing users to deploy AI tools via a preloaded app center without complex command-line configuration.

- Zero Cloud Dependency: Designed for fully on-premises deployment of AI-powered chat interfaces, RAG search engines, and knowledge bases—ensuring data remains in-house while maintaining high-speed performance.

The server runs QNAP’s ZFS-based QuTS hero operating system, delivering enterprise-grade data integrity, inline deduplication, and near-limitless snapshots. It also supports native GPU access in containers through Container Station and GPU passthrough for virtual machines via Virtualization Station—enabling seamless integration into existing IT workflows.

Why This Matters

The QAI-h1290FX addresses a growing need for enterprises to deploy AI models without relying on cloud providers, offering full control over performance, resource allocation, and data privacy. Preloaded AI tools—including AnythingLLM, OpenWebUI, Ollama, Stable Diffusion, ComfyUI, n8n, and vLLM—allow users to rapidly build private LLM workflows while maintaining operational efficiency.

This is more than just a storage server; it’s a complete platform for AI at the edge. Organizations can deploy conversational AI interfaces for knowledge lookup, enterprise RAG search pipelines for internal documents, or AI-driven automation tools—all without compromising on speed or compliance.

Next Steps

The QAI-h1290FX is positioned as a practical solution for legal teams, HR departments, creative workflows, and IT operations looking to integrate generative AI while staying compliant with data residency regulations. With dual 25GbE networking, PCIe slots for optional 100GbE upgrades, and compatibility with QNAP JBOD expansion enclosures, the system scales to meet large-scale AI storage demands.

For developers and IT teams, this represents a significant step forward in on-premises AI deployment. The combination of server-grade processing, GPU acceleration, and preconfigured AI tools eliminates much of the complexity traditionally associated with building an edge AI infrastructure—allowing organizations to focus on model training and application development rather than hardware setup.

The QAI-h1290FX is now available for order, reinforcing QNAP’s commitment to delivering high-performance, scalable solutions that meet the evolving needs of modern AI workloads.