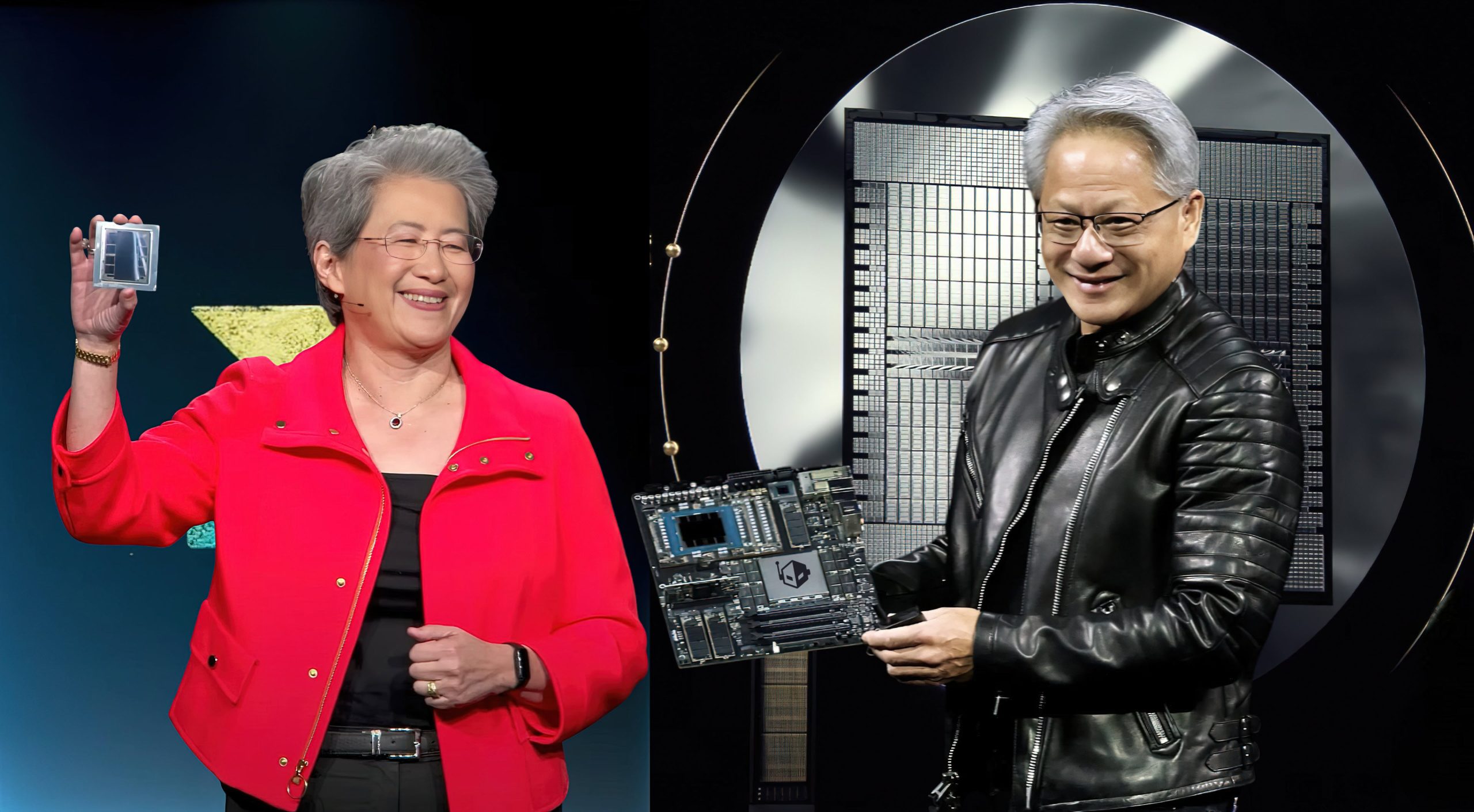

NVIDIA has substantially revised the specifications of its Vera Rubin AI platform, most notably memory bandwidth, as it works to keep its competitive edge over AMD's Instinct MI455X. The change comes as agentic AI systems demand more performance, and NVIDIA has responded by pushing its memory specifications well past their original targets.

The Vera Rubin NVL72, initially announced with 13TB/s of memory bandwidth, now quotes 22.2TB/s, an increase of about 70%. The upgrade, revealed at CES 2026, gives it a lead of roughly 13% over AMD's MI455X at 19.6TB/s. The jump is more than incremental; it reflects a broader trend in which memory bandwidth has become a critical factor in AI infrastructure performance.
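
The relative changes can be checked directly from the quoted bandwidth figures (13, 22.2, and 19.6 TB/s). This is plain arithmetic; the helper name `percent_change` is illustrative only.

```python
def percent_change(new: float, old: float) -> float:
    """Return the relative change from `old` to `new`, in percent."""
    return (new - old) / old * 100.0


# Memory-bandwidth figures quoted in the text, in TB/s.
RUBIN_INITIAL_TBPS = 13.0
RUBIN_CES_TBPS = 22.2
MI455X_TBPS = 19.6

uplift = percent_change(RUBIN_CES_TBPS, RUBIN_INITIAL_TBPS)
lead = percent_change(RUBIN_CES_TBPS, MI455X_TBPS)

print(f"Uplift over the initial spec: {uplift:.1f}%")  # prints 70.8%
print(f"Lead over MI455X: {lead:.1f}%")                # prints 13.3%
```

The lead over MI455X works out to about 13%, and the revision itself is roughly a 70% increase over the originally announced figure.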

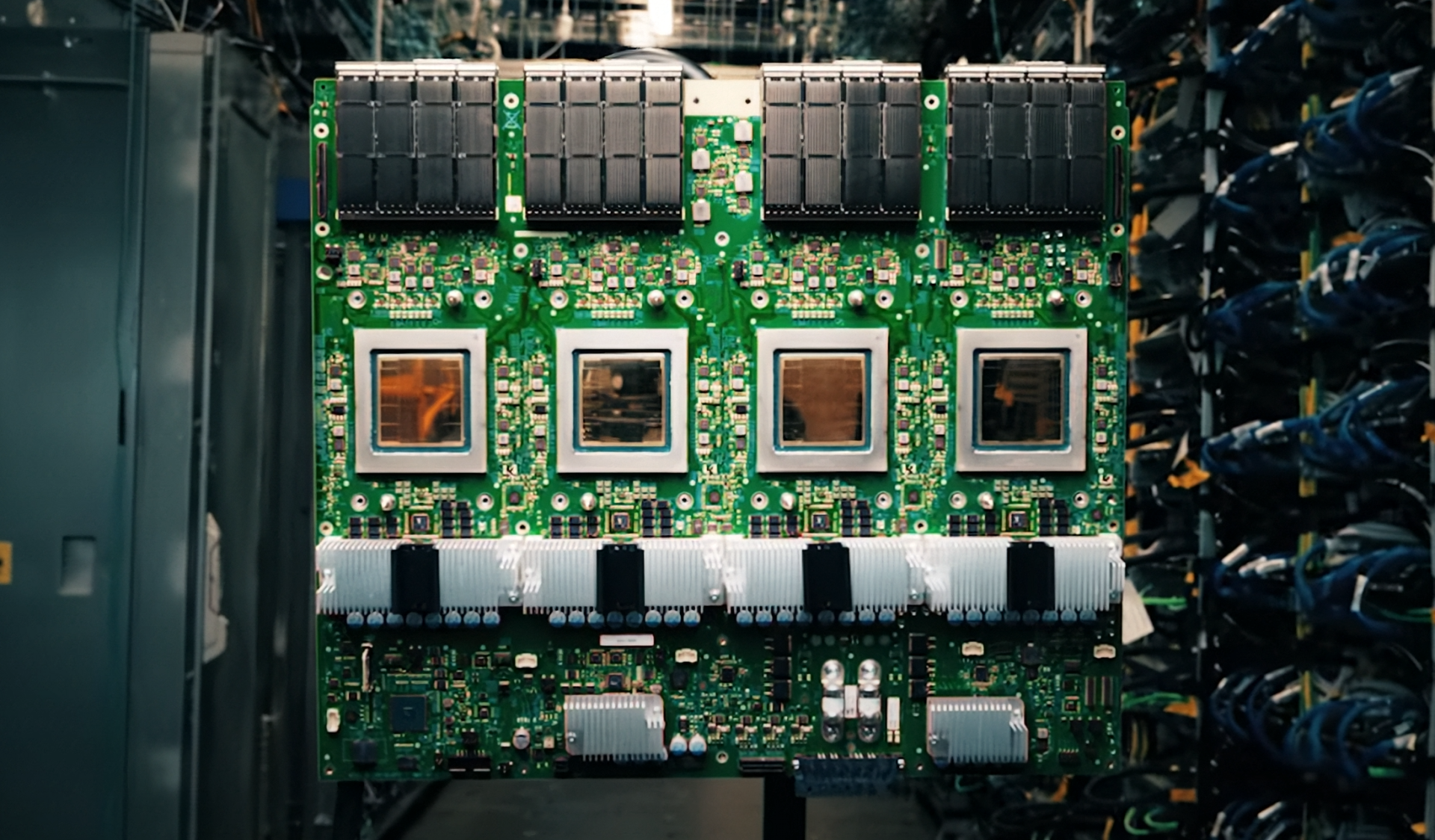

NVIDIA reaches this bandwidth by pushing HBM4 beyond standard JEDEC ratings, with pin speeds of up to 11 Gbps. AMD takes a different route with the MI455X, relying on 12-Hi HBM4 stacks spread across a wider memory interface. Stack height mainly adds capacity; bandwidth grows with the number of stacks and the speed of each pin. With an 8-stack interface, NVIDIA has to run each pin faster to pull ahead, and overclocking the pin speeds is what gives it the edge in memory performance.
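
Peak HBM bandwidth is the product of stack count, interface width per stack, and per-pin data rate. The sketch below assumes the 2048-bit-per-stack interface and 8 Gbps top pin rate of the JEDEC HBM4 standard; it shows why an 8-stack design needs roughly 10.8 Gbps pins to reach 22.2TB/s. The 12-stack count used for MI455X is an assumption for comparison only, not a confirmed specification, and the function names are hypothetical.

```python
# Peak HBM bandwidth = stacks x interface width per stack x per-pin data rate.
# Assumption: 2048 data bits per HBM4 stack, as in the JEDEC HBM4 standard.
HBM4_BITS_PER_STACK = 2048
JEDEC_HBM4_MAX_GBPS = 8.0  # assumed top per-pin rate in the JEDEC HBM4 standard


def aggregate_bandwidth_tbps(stacks: int, pin_gbps: float,
                             bits_per_stack: int = HBM4_BITS_PER_STACK) -> float:
    """Peak bandwidth in TB/s (decimal units) for `stacks` HBM stacks."""
    total_gbit_per_s = stacks * bits_per_stack * pin_gbps
    return total_gbit_per_s / 8 / 1000  # Gbit/s -> GB/s -> TB/s


def required_pin_gbps(target_tbps: float, stacks: int,
                      bits_per_stack: int = HBM4_BITS_PER_STACK) -> float:
    """Per-pin data rate (Gbps) needed to reach `target_tbps` with `stacks` stacks."""
    return target_tbps * 1000 * 8 / (stacks * bits_per_stack)


# 8 stacks at the JEDEC rate vs. at the quoted 11 Gbps ceiling.
print(f"8 stacks @ 8 Gbps:  {aggregate_bandwidth_tbps(8, JEDEC_HBM4_MAX_GBPS):.1f} TB/s")  # 16.4
print(f"8 stacks @ 11 Gbps: {aggregate_bandwidth_tbps(8, 11.0):.1f} TB/s")  # 22.5
# Pin rate an 8-stack design needs for 22.2 TB/s.
print(f"Needed for 22.2 TB/s on 8 stacks: {required_pin_gbps(22.2, 8):.2f} Gbps")  # 10.84
# HYPOTHETICAL: MI455X assumed to spread 19.6 TB/s across 12 stacks.
print(f"Implied for 19.6 TB/s on 12 stacks: {required_pin_gbps(19.6, 12):.2f} Gbps")  # 6.38
```

Under these assumptions, 8 stacks at the JEDEC rate would top out near 16.4TB/s, so the 22.2TB/s figure only makes sense with pins running above spec; a wider 12-stack interface could reach 19.6TB/s at a much lower per-pin rate.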

The upgrade matters beyond the headline number. The extra bandwidth targets agentic AI systems, which are expected to dominate demand this year. It also reflects how AI infrastructure is changing: memory capacity and bandwidth are becoming as important as raw compute. As hyperscalers weigh their options, competition between NVIDIA and AMD is likely to intensify and could shift market share between the two.

The revisions also signal NVIDIA's intent to keep its lead in hyperscaler adoption. With the AI market expanding rapidly, pushing memory bandwidth to its limits is how the company aims to stay ahead. This aggressive approach is likely to shape how next-generation AI systems are designed and deployed, and to raise the bar for memory performance.

- Key Specifications:
  - Memory Bandwidth: 22.2TB/s (Vera Rubin NVL72) vs. 19.6TB/s (AMD Instinct MI455X)
  - HBM4 Stacks: NVIDIA uses an 8-stack interface with pin speeds up to 11 Gbps, while AMD employs 12-Hi HBM4 stacks
  - Performance Impact: The increased bandwidth targets agentic AI systems, which are expected to drive market demand this year

More broadly, the upgrades show how central memory technology has become to AI infrastructure. Compute still matters, but a processor can only work as fast as its memory system feeds it data, so moving data efficiently is now equally critical. How each vendor balances compute against memory is likely to shape AI hardware going forward, with NVIDIA and AMD both vying for dominance in this fast-moving market.
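
The compute-versus-memory balance can be illustrated with the roofline model, which caps a kernel's throughput at the lower of peak compute and bandwidth times arithmetic intensity (FLOPs per byte moved). This is a minimal sketch: the bandwidths are the quoted 22.2 and 19.6 TB/s, but `PEAK_TFLOPS` is a hypothetical placeholder applied to both chips so that only bandwidth differs, and the two intensity values are illustrative, not measured workloads.

```python
# Roofline model: attainable throughput = min(peak compute, bandwidth x intensity).
# Units: TB/s x FLOP/byte = TFLOP/s.

PEAK_TFLOPS = 10_000.0  # HYPOTHETICAL placeholder for both chips; not a real spec


def attainable_tflops(peak_tflops: float, bandwidth_tbps: float,
                      intensity_flop_per_byte: float) -> float:
    """Throughput ceiling for a kernel doing `intensity` FLOPs per byte moved."""
    return min(peak_tflops, bandwidth_tbps * intensity_flop_per_byte)


def ridge_point(peak_tflops: float, bandwidth_tbps: float) -> float:
    """Arithmetic intensity (FLOP/byte) above which a kernel becomes compute-bound."""
    return peak_tflops / bandwidth_tbps


for name, bw in (("Vera Rubin", 22.2), ("MI455X", 19.6)):
    low = attainable_tflops(PEAK_TFLOPS, bw, 2.0)      # memory-bound: scales with bandwidth
    high = attainable_tflops(PEAK_TFLOPS, bw, 2000.0)  # compute-bound: capped at peak
    print(f"{name}: ridge at {ridge_point(PEAK_TFLOPS, bw):.0f} FLOP/B, "
          f"low-intensity {low:.1f} TFLOP/s, high-intensity {high:.0f} TFLOP/s")
```

Under these assumptions the two chips tie on compute-bound work, while low-intensity, memory-bound work (such as small-batch token generation) differs by the bandwidth ratio of about 13%.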

As the rivalry continues, memory bandwidth and other performance metrics will remain central to how AI infrastructure is judged. The Vera Rubin revisions make clear that NVIDIA does not intend to cede its leadership position despite growing pressure from competitors, and the move could set the pace for the next generation of high-performance AI systems.