NVIDIA’s upcoming Rubin platform, designed to power the next wave of AI infrastructure, is entering a critical phase: the validation of HBM4 memory from its three primary suppliers. Samsung, SK hynix, and Micron are all expected to finalize HBM4 certification by the second quarter of 2026, setting the stage for mass production—but the process comes with challenges.

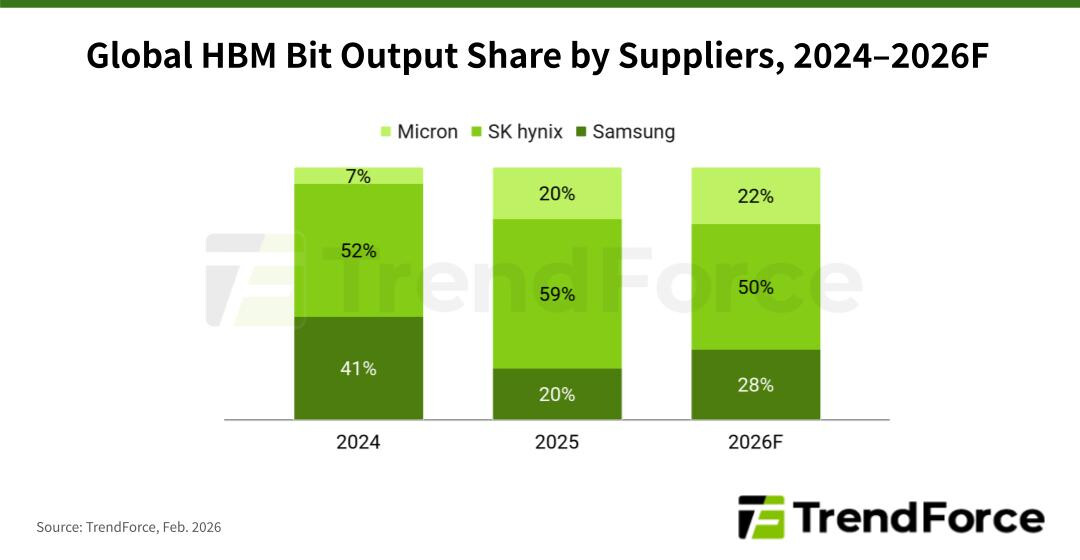

The Rubin platform, which will underpin future GPUs like the rumored RTX 6000 series, demands a stable supply of HBM4, a high-bandwidth memory solution critical for AI workloads. TrendForce’s latest analysis suggests Samsung is poised to lead validation efforts, followed closely by SK hynix and Micron. However, the broader memory market, particularly surging demand for conventional DRAM, is forcing suppliers to recalibrate production priorities, potentially creating delays.

While NVIDIA’s Rubin architecture is expected to accelerate AI server deployments, the company’s reliance on a diversified HBM4 supply chain reflects growing concern over single-supplier risk. If any vendor falls behind, it could slow the platform’s ramp-up, particularly as North American cloud service providers (CSPs) expand their AI agent infrastructure.

What This Means for AI Infrastructure

The Rubin platform’s success depends on HBM4’s ability to meet the demands of inference-heavy AI workloads. Unlike previous generations, HBM4’s adoption is being driven by real-time AI applications, where latency and bandwidth are non-negotiable. With CSPs like Microsoft, Google, and Amazon accelerating AI server deployments, NVIDIA’s HBM4 supply chain must scale quickly or risk becoming a bottleneck for the entire ecosystem.

Samsung’s early validation advantage could give it a head start in bit allocation, but SK hynix and Micron are not far behind. Micron, in particular, has faced slower progress due to its broader memory portfolio, but completing validation by mid-2026 would let NVIDIA maintain a multi-supplier strategy, in line with the industry’s broader move away from single-supplier dependence.

Supply Chain Tensions and DRAM Competition

The memory shortage that has plagued the tech industry for years is now spilling into HBM production. With DRAM prices surging since late 2025, suppliers are being forced to split capacity between high-demand conventional DRAM and HBM4. This balancing act could delay HBM4’s commercial rollout as vendors prioritize revenue from more immediate markets.

For NVIDIA, this means taking a cautious approach to Rubin’s production timeline. While the company has expressed optimism about AI-driven demand, the need to qualify several HBM4 suppliers rather than rely on a single dominant one could slow scaling. If Samsung, SK hynix, and Micron all pass validation, NVIDIA may distribute orders across all three; if one supplier lags, the platform’s launch could be pushed back.

Looking Ahead: Rubin and Beyond

Beyond Rubin, the HBM4 ecosystem will play a key role in future GPU architectures, including potential successors to the RTX 50 series. With rumors of an RTX 6000 series emerging as early as CES 2026, and reports that AI-driven memory pricing could push the RTX 5090 to $5,000, NVIDIA’s ability to secure a stable HBM4 supply will determine how quickly these products reach the market.

For data center administrators, the Rubin platform’s arrival could mean significant upgrades in AI training and inference capabilities. However, the transition to HBM4-based systems will require careful planning, as compatibility and driver support may lag behind hardware availability. Meanwhile, the broader memory crunch suggests that HBM’s profitability, historically a strong selling point for suppliers, may face new scrutiny as they weigh HBM4’s long-term ROI against immediate gains from conventional DRAM.

The race for HBM4 certification is now in its final stretch. If all three suppliers meet their 2Q26 targets, NVIDIA’s Rubin platform could enter mass production with a stable supply chain. But if delays emerge, the AI infrastructure boom of 2026 may face unexpected headwinds.