NVIDIA has announced the Jetson Thor platform, a new solution designed to deliver large language model capabilities directly to edge devices. Unlike traditional AI setups that rely on cloud connectivity, Jetson Thor operates independently, offering low-latency processing for industrial applications where real-time performance is essential.

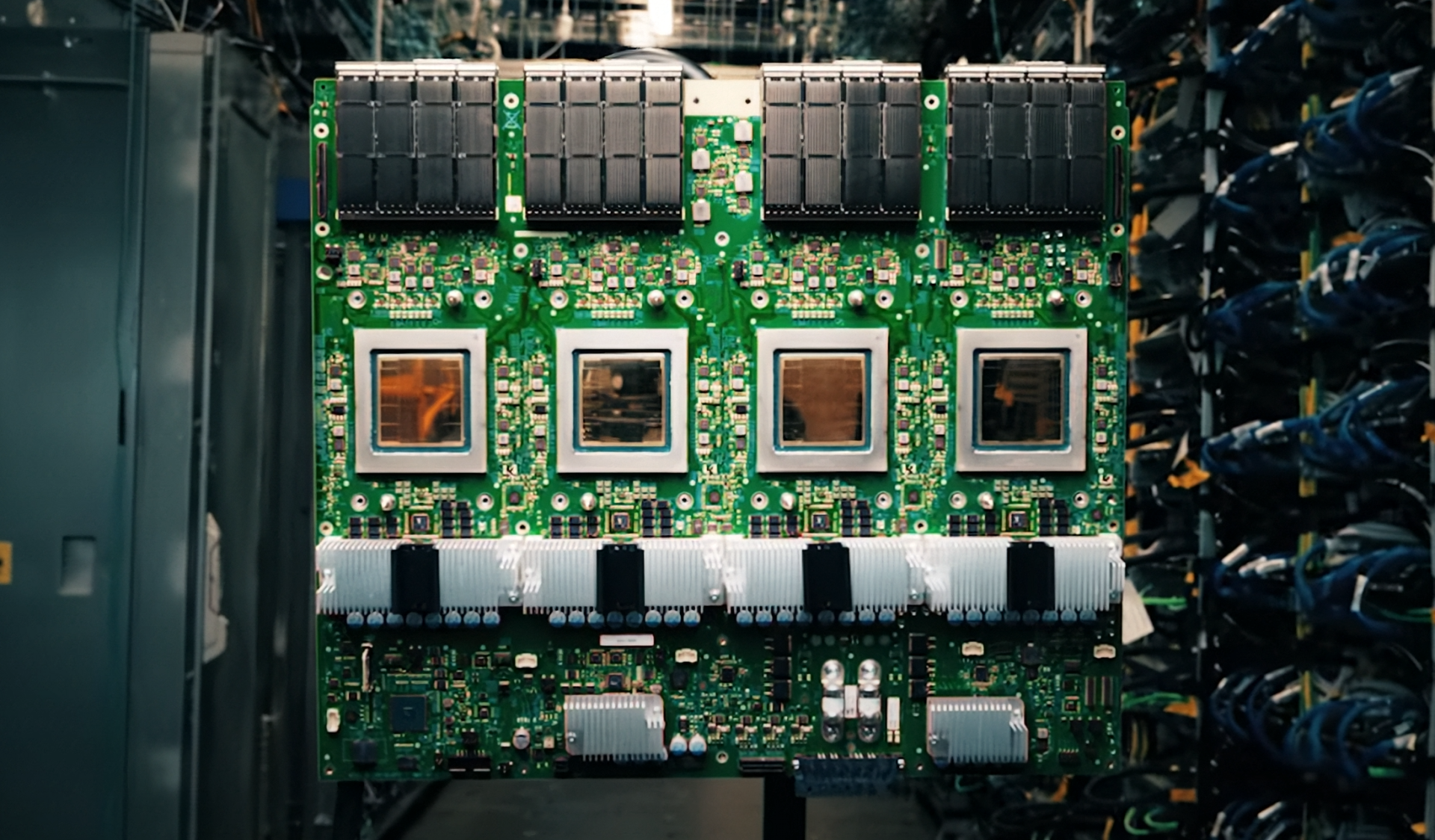

The platform integrates compute and memory into a single system-on-module, which helps address the component shortages currently affecting the industry. This design simplifies hardware development while remaining power-efficient, and both factors are critical for edge deployments.

Jetson Thor supports open models including Qwen3 4B, GR00T N1.6, and others optimized for the vLLM, llama.cpp, and SGLang frameworks. Performance metrics show the platform can process up to 273 tokens per second when serving concurrent requests, making it suitable for complex industrial tasks.
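To make the throughput figure concrete, here is a minimal sketch of how an aggregate tokens-per-second number is commonly computed for concurrent serving: total generated tokens across all requests divided by wall-clock time. The function name, the request count, and the token counts are hypothetical values chosen for illustration. They are not NVIDIA's benchmark configuration, and the article does not say how the 273 tokens per second figure was measured.

```python
def aggregate_tokens_per_second(tokens_per_request, elapsed_seconds):
    """Return total generated tokens divided by wall-clock time.

    tokens_per_request: number of tokens generated by each concurrent request.
    elapsed_seconds: wall-clock duration of the whole batch of requests.
    """
    if elapsed_seconds <= 0:
        raise ValueError("elapsed_seconds must be positive")
    return sum(tokens_per_request) / elapsed_seconds


# Hypothetical run (illustrative numbers, not measured data):
# 8 concurrent requests, 512 generated tokens each, finishing in 15 s.
tokens = [512] * 8
throughput = aggregate_tokens_per_second(tokens, 15.0)

print(f"{throughput:.1f} tokens/s aggregate")
print(f"{throughput / len(tokens):.1f} tokens/s per request")
```

Under these assumed numbers the aggregate rate is about 273 tokens per second while each individual request sees roughly 34 tokens per second. This is why a concurrent throughput figure should not be read as the speed of a single response.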

Early demonstrations at CES showcased practical applications: Caterpillar integrated Jetson Thor into a compact excavator cab, enabling voice-guided operator assistance with NVIDIA Nemotron speech models. Franka Robotics used the platform to power dual-arm robots performing perception and motion tasks without cloud dependency.

Research applications are also emerging. NYU's Center for Robotics and Embodied Intelligence has leveraged Jetson Thor to enhance humanoid robot dexterity in pick-and-place operations, while independent developers have built AI assistants on Jetson AGX Orin for local task management.

The platform's 50 Hz processing rate, equivalent to one cycle every 20 ms, supports smooth operation in dynamic environments. While availability details remain pending, NVIDIA is expected to provide updates at GTC 2026. Jetson Thor represents a significant advancement in edge AI deployment, addressing memory constraints and power efficiency while maintaining performance parity with cloud-based solutions.