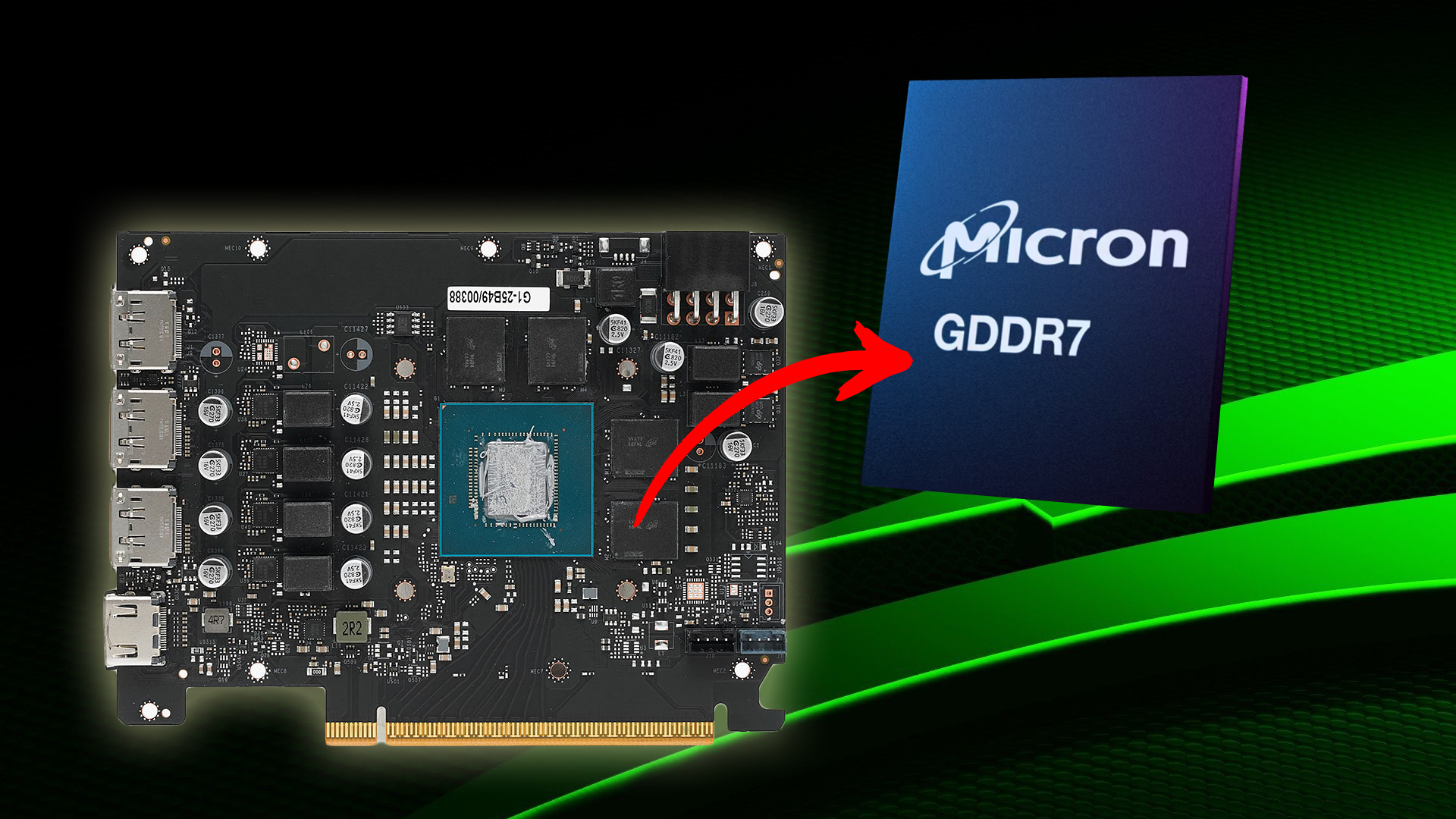

NVIDIA’s inclusion of Micron GDDR7 memory in its RTX 50 series marks a notable pivot in GPU memory supply chains, one that could reshape how IT teams approach hardware procurement and future-proofing. This shift, while addressing immediate shortages, also raises questions about the long-term implications for performance benchmarks and ecosystem stability.

Until now, NVIDIA has largely relied on Samsung and SK Hynix for GDDR7 modules in the RTX 50 lineup, with the RTX 5080 standing out as the sole exception by using 30 Gbps memory. The rest—including the RTX 5060—operate at 28 Gbps. The appearance of Micron’s MT68A512M32DF, a 2 GB module rated for 28 Gbps, suggests NVIDIA is diversifying its supply base under pressure from ongoing DRAM shortages. This isn’t the first time Micron has supplied memory to NVIDIA; however, its role in the RTX 50 series is a clear indicator of how tightly constrained the market remains.

Micron’s appearance in the RTX 50 series also highlights the company’s preparation for sustained demand. Earlier this year, Micron confirmed its own 24 GB GDDR7 modules, capable of 36 Gbps speeds. Those specs exceed anything used in the current RTX 50 series, but they could hint at future GPU architectures. For IT teams, this means watching how NVIDIA balances memory efficiency with performance in upcoming models, particularly as prices for existing GPUs have already climbed by at least 15% since October 2025 and show no signs of stabilizing.

Key specs:

- Micron GDDR7 MT68A512M32DF (2 GB, 28 Gbps) used in RTX 5060
- RTX 5080 remains the only model with 30 Gbps memory
- Micron’s 24 GB GDDR7 modules (36 Gbps) not yet integrated into RTX 50 series
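
To put the per-pin speeds above in context, here is a rough sketch of how a data rate in Gbps translates into peak theoretical memory bandwidth, and how a 2 GB capacity could follow from the module's organization. The bus widths (128-bit for the RTX 5060, 256-bit for the RTX 5080) are not stated in this article and are assumed from NVIDIA's published specifications. Reading "512M32" in the part number as 512M words of 32 bits each is also an assumption, and the helper names are hypothetical.

```python
def bandwidth_gb_per_s(pin_rate_gbps: float, bus_width_bits: int) -> float:
    """Peak theoretical bandwidth in GB/s: per-pin rate (Gbit/s) x bus width (bits) / 8."""
    return pin_rate_gbps * bus_width_bits / 8


def module_capacity_gb(depth_mega_words: int, width_bits: int) -> float:
    """Capacity in GB of a device organized as depth (in Mi words) x width (in bits)."""
    total_bits = depth_mega_words * 2**20 * width_bits
    return total_bits / 8 / 2**30


# Per-pin rates come from the article; bus widths are assumptions (see lead-in).
cards = {
    "RTX 5060": (28.0, 128),
    "RTX 5080": (30.0, 256),
}

for name, (rate, width) in cards.items():
    print(f"{name}: {bandwidth_gb_per_s(rate, width):.0f} GB/s peak")

# Assumed reading of "512M32": 512M words x 32 bits per word.
print(f"Module capacity: {module_capacity_gb(512, 32):.1f} GB")
```

Under these assumptions, the gap between the 28 Gbps and 30 Gbps speed bins is about 7% of peak bandwidth at a fixed bus width. A supplier change within the same 28 Gbps bin should therefore leave the theoretical figure unchanged.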

The practical impact for IT teams falls into two areas: immediate availability and long-term planning. In the short term, diversifying suppliers may ease shortages, but it also introduces variables in memory consistency and performance tuning. In the long term, Micron’s higher-speed modules suggest NVIDIA is laying groundwork for future architectures, possibly including the rumored Rubin series. The open question is whether this shift yields tangible improvements or simply a more fragmented ecosystem.

The broader trend here is clear: as AI workloads and efficiency demands grow, GPU memory sourcing has become a critical battleground. NVIDIA’s addition of Micron as a GDDR7 supplier reflects not just a supply-side fix but a strategic adjustment in how it navigates an increasingly competitive and constrained market. For IT teams, the takeaway is straightforward: expect continued volatility in pricing and performance until the next generation of GPUs solidifies its footprint.