NVIDIA and T-Mobile have announced a partnership to integrate AI applications directly into mobile network infrastructure, with an emphasis on real-world physical AI tasks such as robotics and autonomous systems.

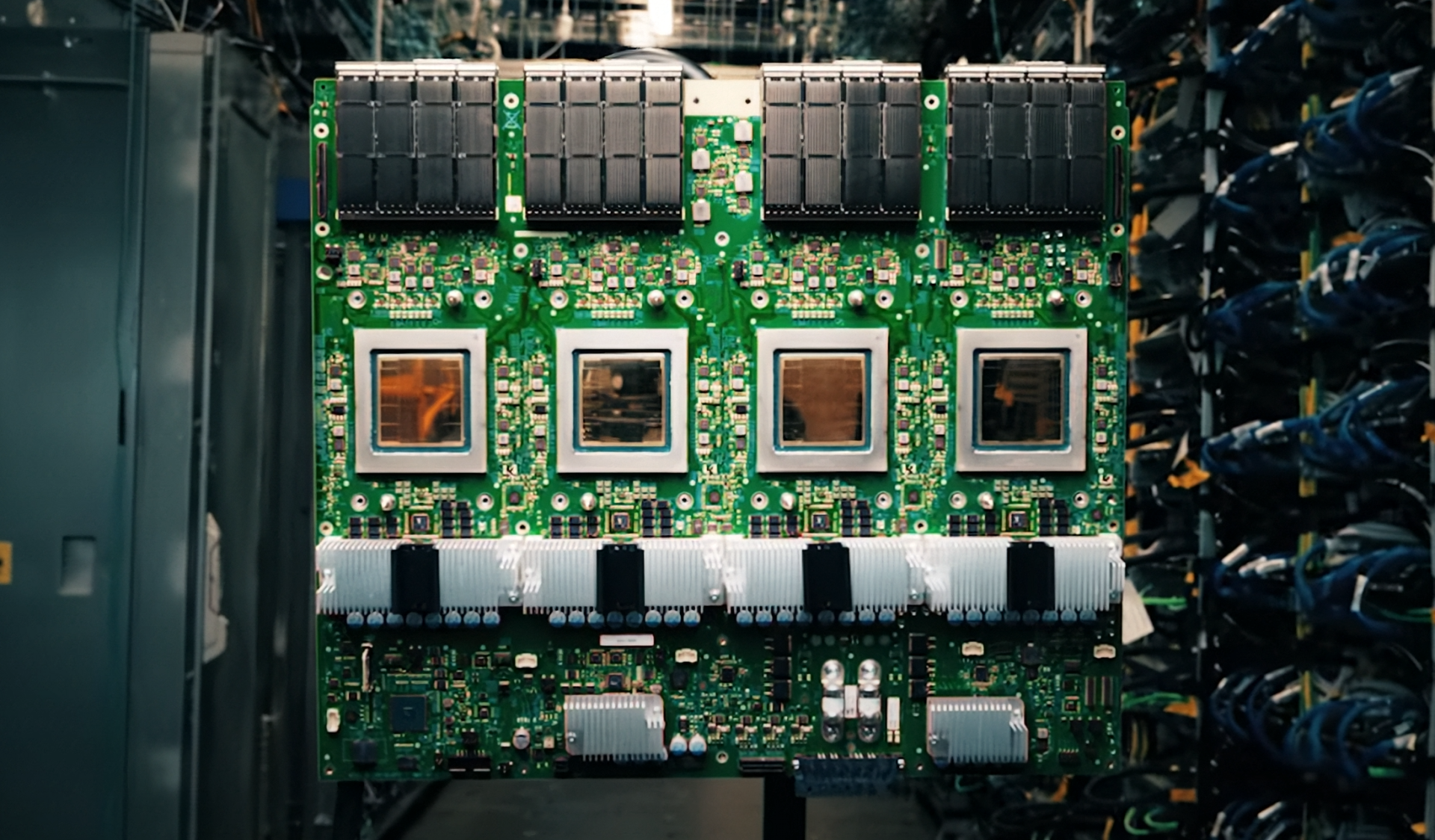

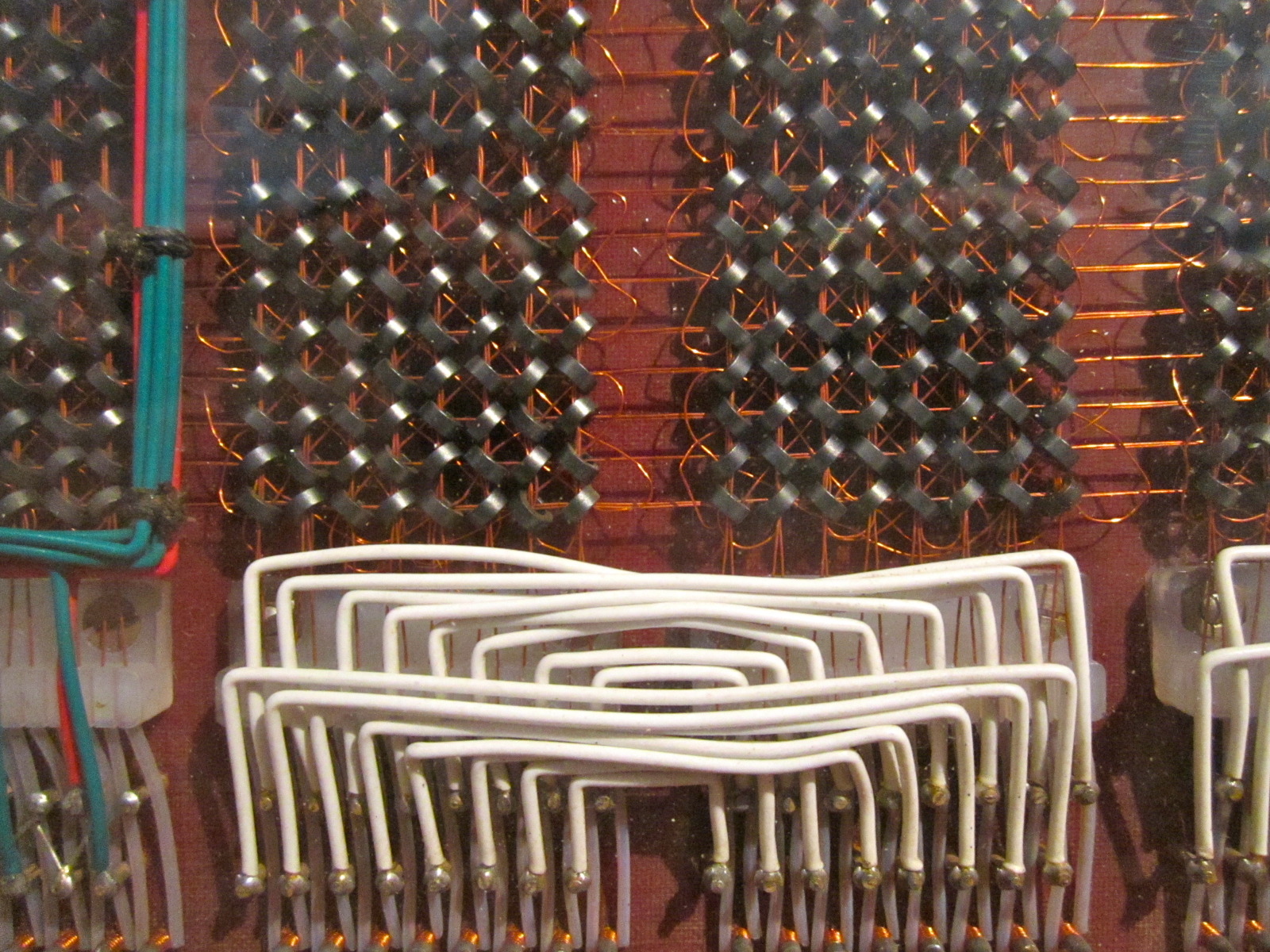

The new AI-RAN-ready infrastructure, in which the radio access network (RAN) also hosts AI computing, is built to handle the demands of next-generation AI workloads, including high-performance computing at the network edge. This marks a shift from centralized, cloud-based AI processing toward distributed, low-latency processing that can power applications such as industrial automation, smart cities, and advanced robotics.

Enthusiasts vs. Everyday Users

The focus on physical AI opens up possibilities for developers working on cutting-edge projects, particularly in robotics and autonomous systems. For everyday users, the impact may be less immediate but equally significant over time, as these advancements could lead to more efficient infrastructure and smarter urban environments.

- Enthusiasts:
  - High-performance edge computing for AI workloads
  - Support for advanced robotics and autonomous systems
  - Future-proofing for developers building next-gen applications

- Everyday Users:
  - More efficient infrastructure in smart cities
  - Improved reliability in network-dependent applications
  - Long-term benefits from AI-driven optimizations

The collaboration leverages NVIDIA's expertise in AI and T-Mobile's 5G and edge computing capabilities to create a scalable, low-latency platform. This is not just about faster networks; it's about redefining how AI interacts with the physical world.

Engineering Tradeoffs

The new infrastructure introduces tradeoffs that developers will need to navigate. For real-time applications like robotics, the central one is latency versus computational efficiency: running a model in a distant data center adds network delay, while running it at the edge cuts that delay but draws on more limited compute. The system must also distribute AI workloads across edge sites and devices without exceeding each application's latency budget.

One key element is NVIDIA's AI-optimized platform, which combines GPUs, accelerators, and software stacks designed to integrate with 5G and edge computing. This allows AI models to be deployed closer to where data is generated, reducing latency and improving response times.
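To make the latency tradeoff concrete, here is a minimal Python sketch of a workload placement decision. It is not part of the NVIDIA or T-Mobile platform: the `Site` class, the `place_workload` function, the site names, and every latency and cost figure are hypothetical assumptions chosen for illustration. The rule it encodes is simple: among the sites whose network round trip plus inference time fits the application's deadline, pick the cheapest.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class Site:
    """A hypothetical compute location; all figures are illustrative assumptions."""
    name: str
    network_rtt_ms: float  # round trip between the device and this site
    inference_ms: float    # model execution time at this site
    cost_per_call: float   # relative compute cost, arbitrary units

    @property
    def total_latency_ms(self) -> float:
        # End-to-end latency = network round trip + on-site inference.
        return self.network_rtt_ms + self.inference_ms


def place_workload(sites: list[Site], deadline_ms: float) -> Site | None:
    """Return the cheapest site whose end-to-end latency meets the deadline.

    Ties on cost are broken by lower latency. Returns None when no site
    can meet the deadline.
    """
    feasible = [s for s in sites if s.total_latency_ms <= deadline_ms]
    if not feasible:
        return None
    return min(feasible, key=lambda s: (s.cost_per_call, s.total_latency_ms))


if __name__ == "__main__":
    # Hypothetical sites: a distant, cheaper cloud and a nearby, pricier edge.
    sites = [
        Site("regional-cloud", network_rtt_ms=60.0, inference_ms=8.0, cost_per_call=1.0),
        Site("ran-edge", network_rtt_ms=5.0, inference_ms=15.0, cost_per_call=3.0),
    ]
    # A tight robot-control budget can only be met at the edge.
    print(place_workload(sites, deadline_ms=30.0).name)
    # A loose analytics budget lets the cheaper cloud site win.
    print(place_workload(sites, deadline_ms=200.0).name)
```

With these made-up numbers, a 30 ms control budget rules out the cloud site (68 ms end to end), so the work lands at the edge (20 ms), while a 200 ms budget lets the cheaper cloud site win. A production scheduler would also weigh site load, bandwidth, and energy, which this sketch ignores.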

However, the challenge lies in ensuring that these systems are both powerful enough for demanding applications and flexible enough to adapt to future needs. The partnership aims to address this by providing a robust foundation that can scale with advancements in AI and networking technologies.

The Road Ahead

What is confirmed is the commitment to building an AI-ready network infrastructure that prioritizes physical applications. The focus on edge computing and low-latency processing sets a clear path for developers and enterprises to innovate without being constrained by traditional cloud limitations.

What remains unconfirmed is how quickly these advancements will translate into tangible benefits for end-users. While the long-term vision is clear, the pace of adoption and the specific use cases that will emerge in the near future are still uncertain. This partnership could accelerate those developments, but only time will tell.

For now, the collaboration represents a significant step toward integrating AI more deeply into the physical world, with potential ripple effects across industries from robotics to smart infrastructure. The question is no longer whether AI will shape our networks, but how quickly and comprehensively it will do so.