Discord is tightening its age-restriction controls with a new system that will determine which servers require adult verification. The approach combines artificial intelligence with human oversight to identify specific communities and restrict access to them for users who have not verified their age. The platform has clarified, however, that game ratings alone won't trigger these restrictions.

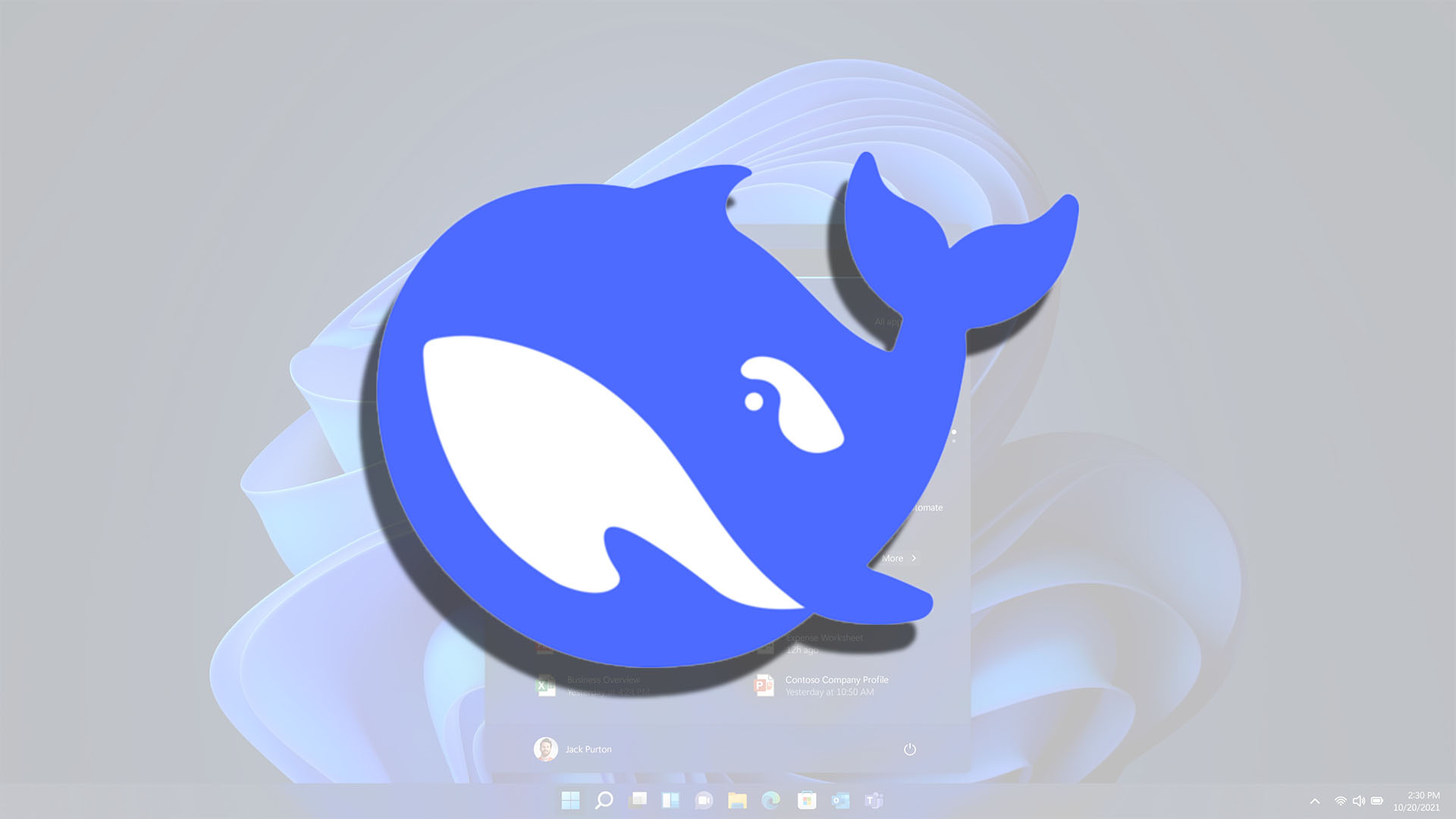

The rollout, set for March, follows Discord's earlier introduction of facial recognition and ID checks for users opting out of a default teen-friendly experience. Some of the restricted features, such as stage channels, might seem niche, but the broader impact extends to direct messages, friend requests, and now participation in entire servers.

How will Discord decide which servers need age verification? A representative explained that the process combines automated tools with manual reviews to proactively flag servers and restrict access to them. Servers won't be restricted simply because of a game's ESRB or PEGI rating; instead, they will be identified through a combination of content analysis and monitoring of community behavior.

Critics, however, question whether this system strikes the right balance between safety and privacy. The platform's partnership with an age-verification vendor with ties to surveillance technology has already drawn scrutiny, particularly in regions like the UK, where users may be subjected to experimental verification methods.

For those uncomfortable with Discord’s expanding data collection, alternatives like Matrix-based platforms or even legacy IRC networks remain options. But for now, the shift toward AI-driven moderation represents another layer in Discord’s evolving approach to user segmentation.