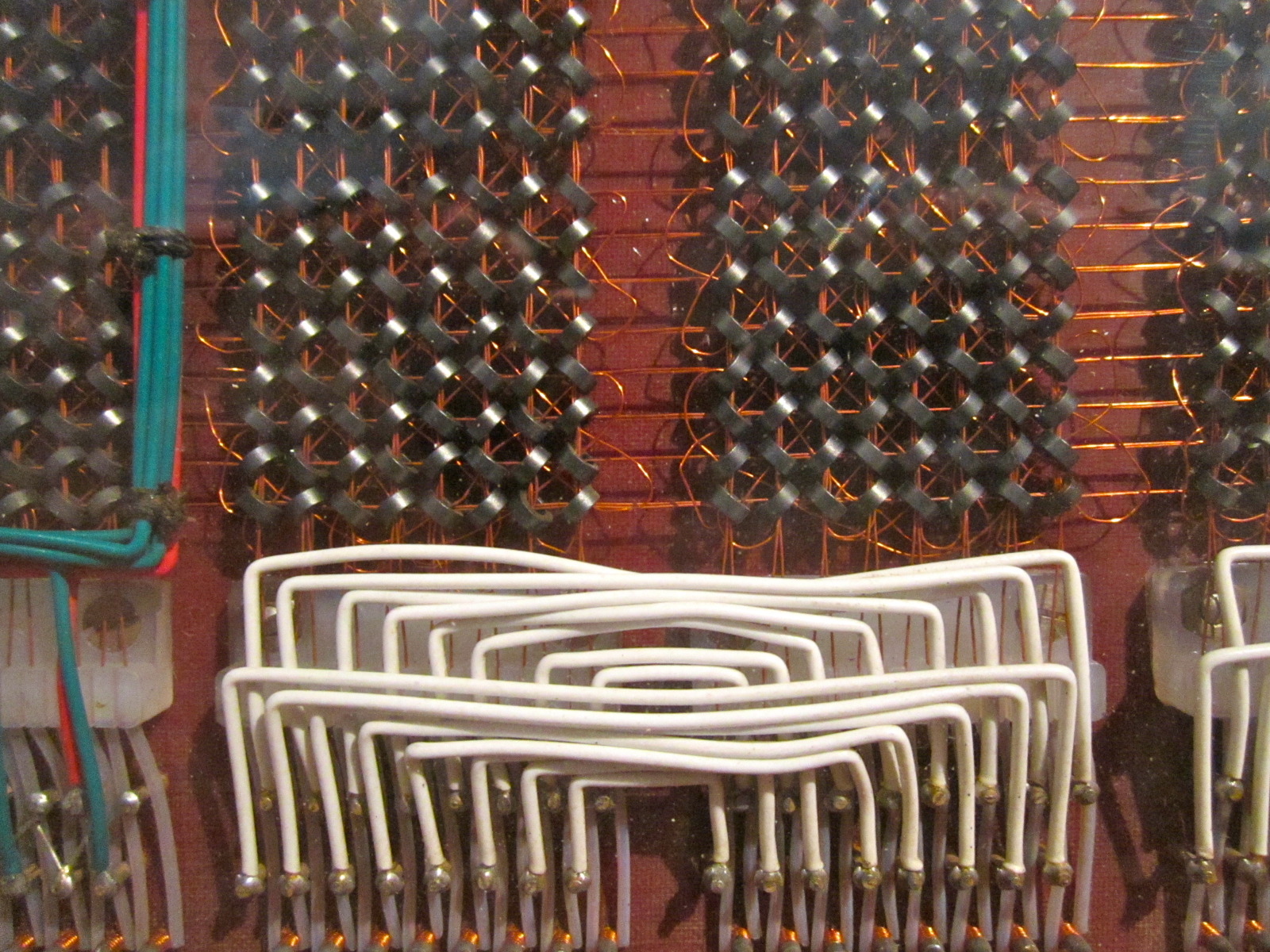

The rapid advancement of AI infrastructure is reshaping how organizations approach large-scale data processing. However, this progress comes with a growing concern: the balance between performance gains and the risks associated with vendor-specific ecosystems. Dell Technologies and NVIDIA are stepping directly into this tension with new tools that promise to accelerate AI training and inference, while also raising questions about long-term flexibility.

The partnership introduces three key components: Dell’s exascale storage solution, a data engine optimized for AI workloads, and a high-speed file system codenamed Lightning. Together, they aim to reduce latency in large-scale AI operations, which is critical for teams working with massive datasets. However, the integration with NVIDIA’s platform implies a deeper dependency on its hardware and software stack, a tradeoff that could limit future upgrade paths.

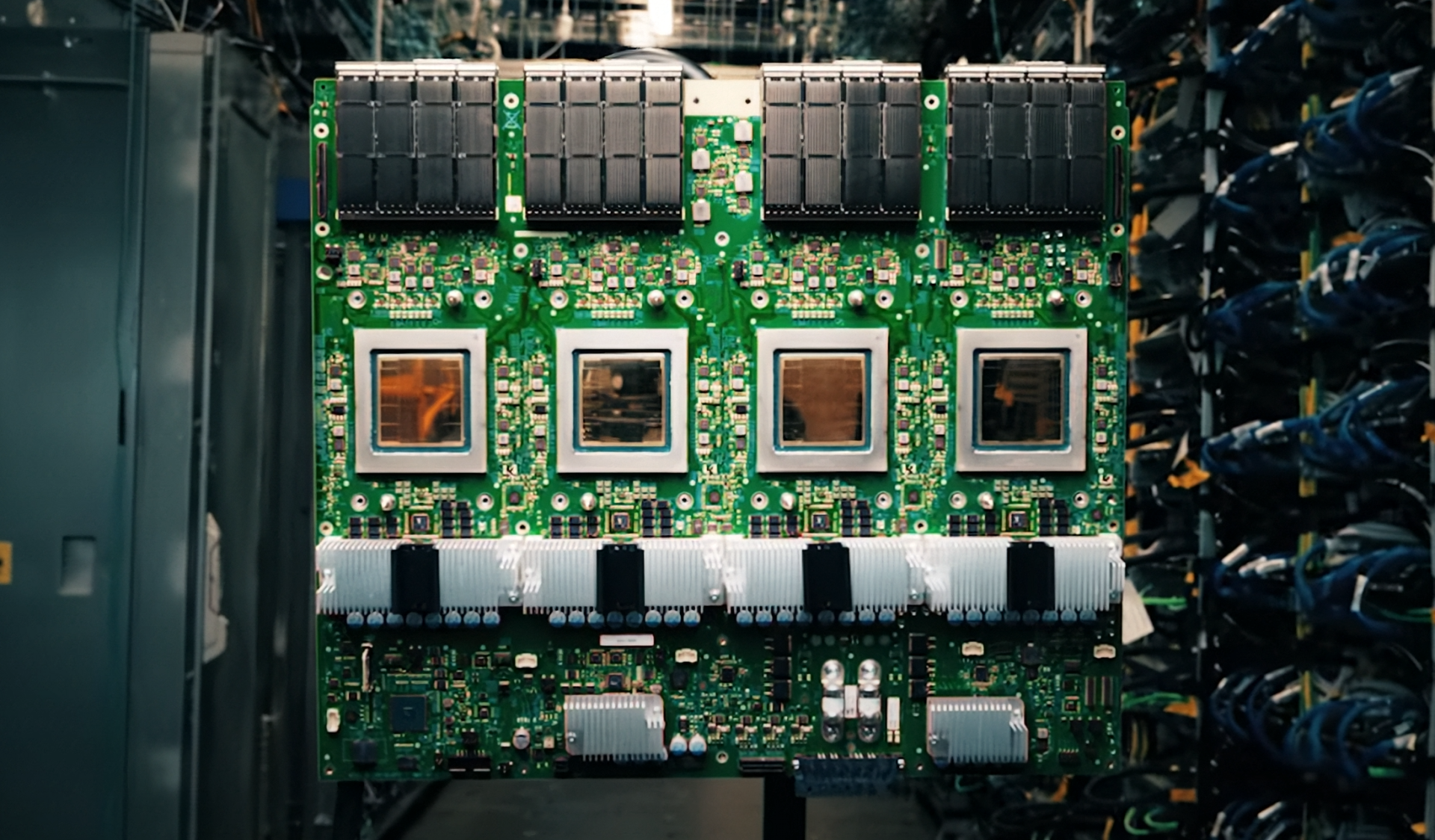

At the heart of the collaboration is Dell’s new exascale storage system, designed to handle petabytes of unstructured data at high speed in enterprise environments. Reported benchmarks indicate throughput improvements of up to 40% compared to existing solutions, a significant gain for AI training pipelines where data movement bottlenecks are common. The system builds on NVIDIA’s latest GPUs and networking technologies and is intended to integrate directly with CUDA-accelerated workloads.
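
How much of a storage throughput gain reaches the end of a training run depends on how much of each step is actually spent waiting on data. The sketch below is a back-of-the-envelope estimate in the style of Amdahl's law, not a model of Dell's system: the function name `step_speedup`, the `io_fraction` values, and the assumption that I/O and compute do not overlap are all hypothetical, and the 0.40 figure is simply the "up to 40%" upper bound taken at face value.

```python
def step_speedup(io_fraction: float, io_throughput_gain: float) -> float:
    """Estimate overall training-step speedup when only the I/O part gets faster.

    io_fraction: share of step time spent waiting on storage (0.0 to 1.0).
                 Hypothetical input; measure it on your own pipeline.
    io_throughput_gain: relative throughput improvement, e.g. 0.40 for 40%.

    Assumes I/O and compute run back to back (no prefetch overlap), which
    overstates the benefit for pipelines that already hide I/O behind compute.
    """
    if not 0.0 <= io_fraction <= 1.0:
        raise ValueError("io_fraction must be between 0 and 1")
    if io_throughput_gain < 0.0:
        raise ValueError("io_throughput_gain must be non-negative")

    # Higher throughput shrinks only the I/O portion of the step.
    new_io_time = io_fraction / (1.0 + io_throughput_gain)
    new_total_time = (1.0 - io_fraction) + new_io_time
    return 1.0 / new_total_time


if __name__ == "__main__":
    # Illustrative I/O shares, not measurements.
    for io_fraction in (0.1, 0.3, 0.5, 0.8):
        speedup = step_speedup(io_fraction, 0.40)
        print(f"I/O share {io_fraction:.0%}: overall speedup {speedup:.2f}x")
```

Under these assumptions, a pipeline that spends only a small share of its time on I/O sees a correspondingly small overall gain, so the headline figure matters most to workloads that are genuinely storage-bound.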

Complementing the storage is Dell’s Lightning file system, built to reduce I/O latency by up to 30% through predictive caching and adaptive data placement. Early tests show promising results in real-world AI scenarios such as large language model training, but the system’s value hinges on its ability to scale without becoming a bottleneck itself. Teams running heterogeneous hardware may find the gains attractive, yet they are also the ones for whom lock-in to NVIDIA’s ecosystem could become a long-term constraint.

Perhaps the most intriguing addition is Dell’s new data engine, optimized specifically for AI workloads. Unlike traditional storage engines, this component is designed to preprocess and structure data in ways that align with AI model requirements, potentially reducing the need for custom preprocessing scripts. However, how well it works with non-NVIDIA hardware remains an open question, and its broader usefulness depends on the answer.

For creators working at the intersection of AI and large-scale data, these tools represent a compelling step forward. The exascale storage and Lightning file system address two persistent pain points: speed and scalability. But the deeper integration with NVIDIA’s platform introduces a critical consideration: whether the performance gains justify the potential for vendor lock-in.

Those who benefit most will likely be teams already deeply invested in NVIDIA’s ecosystem, particularly those working on large-scale AI projects where every millisecond of latency matters. For others, the tradeoff between cutting-edge performance and long-term flexibility may not yet be worth it—at least until alternatives emerge that offer similar speed without the same level of platform dependency.